Deploying a Multi-Node Kafka Cluster with Docker on a Virtual Machine

Preface

This article records my process of learning Kafka.

1 Installing Docker

1.1 Install yum-utils

sudo yum install -y yum-utils

1.2 Add the Docker repository

sudo yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

1.3 Enable the nightly or test repositories

sudo yum-config-manager --enable docker-ce-nightly

sudo yum-config-manager --enable docker-ce-test

1.4 Install the latest Docker and containerd.io

sudo yum install https://download.docker.com/linux/fedora/30/x86_64/stable/Packages/containerd.io-1.2.6-3.3.fc30.x86_64.rpm

sudo yum install docker-ce docker-ce-cli

1.5 Start Docker

sudo systemctl start docker

1.6 Enable Docker to start on boot

sudo systemctl enable docker

1.7 Check the Docker version

docker -v

1.8 Install Compose

sudo curl -L "https://github.com/docker/compose/releases/download/1.25.4/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose

yum -y install epel-release

yum -y install python3-pip

1.9 Apply executable permissions to the binary

sudo chmod +x /usr/local/bin/docker-compose

1.10 Check the Compose version

docker-compose --version

2 Installing the ZooKeeper and Kafka images

2.1 Search for images

docker search zookeeper

docker search kafka

2.2 Pull the images

docker pull zookeeper

docker pull wurstmeister/kafka

docker pull hlebalbau/kafka-manager # management tool

2.3 Create the required files and directories (in the same directory as docker-compose.yml)

2.3.1 Kafka directories

mkdir kafka1

mkdir kafka2

mkdir kafka3

2.3.2 ZooKeeper directories

mkdir zookeeper1

mkdir zookeeper2

mkdir zookeeper3

2.3.3 ZooKeeper configuration directories

mkdir zooConfig

cd zooConfig

mkdir zoo1

mkdir zoo2

mkdir zoo3
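The directory steps in 2.3.1 to 2.3.3 can also be done in one pass. The following is a minimal sketch, assuming it is run from the directory that will hold docker-compose.yml; the BASE variable is an assumption introduced here for illustration and is not part of the original steps.

```shell
# Sketch: create the same directory layout as sections 2.3.1-2.3.3 in one pass.
# BASE is an assumed variable (not in the original); it defaults to the current directory.
BASE="${BASE:-$PWD}"

for i in 1 2 3; do
  # -p creates missing parent directories and does not fail if the directory already exists
  mkdir -p "$BASE/kafka$i" "$BASE/zookeeper$i" "$BASE/zooConfig/zoo$i"
done

# Show the result so the layout can be checked by eye
ls "$BASE" "$BASE/zooConfig"
```

Because mkdir -p is idempotent, the loop can be re-run safely if a step was interrupted.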

2.3.4 In each of zoo1, zoo2, and zoo3, create a myid file containing that node's ID number; for example, the myid file in zoo1 contains 1
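One way to write the three myid files is a short loop. This is only a sketch: CONF_DIR is a hypothetical variable name, and the default path assumes the command is run from the directory that contains zooConfig.

```shell
# Sketch: write each node's ID into its myid file.
# CONF_DIR is a hypothetical name; the default assumes the current directory contains zooConfig.
CONF_DIR="${CONF_DIR:-./zooConfig}"

for i in 1 2 3; do
  mkdir -p "$CONF_DIR/zoo$i"          # harmless if the directory already exists
  echo "$i" > "$CONF_DIR/zoo$i/myid"  # zoo1/myid holds 1, zoo2/myid holds 2, zoo3/myid holds 3
done

# Print the three files to confirm their contents
cat "$CONF_DIR"/zoo*/myid
```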

2.3.5 Create the ZooKeeper configuration file zoo.cfg

# The number of milliseconds of each tick

tickTime=2000

# The number of ticks that the initial

# synchronization phase can take

initLimit=10

# The number of ticks that can pass between

# sending a request and getting an acknowledgement

syncLimit=5

# the directory where the snapshot is stored.

# do not use /tmp for storage, /tmp here is just

# example sakes.

dataDir=/data

dataLogDir=/datalog

# the port at which the clients will connect

clientPort=2181

# the maximum number of client connections.

# increase this if you need to handle more clients

#maxClientCnxns=60

#

# Be sure to read the maintenance section of the

# administrator guide before turning on autopurge.

#

# http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance

#

# The number of snapshots to retain in dataDir

autopurge.snapRetainCount=3

# Purge task interval in hours

# Set to "0" to disable auto purge feature

autopurge.purgeInterval=1

server.1=zoo1:2888:3888

server.2=zoo2:2888:3888

server.3=zoo3:2888:3888
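Each server.N line names one ensemble member: N is the node ID (matching myid), followed by the host name, the port followers use to connect to the leader (2888), and the leader-election port (3888). As a quick sanity check, the entries can be pulled out of the file with sed. The helper name list_zk_servers is hypothetical and not a ZooKeeper tool.

```shell
# Sketch: print the ensemble members declared in a zoo.cfg file.
# list_zk_servers is a hypothetical helper, not part of ZooKeeper.
list_zk_servers() {
  # Match lines such as "server.1=zoo1:2888:3888" (optional spaces around "=")
  # and print "<id> <host> <peer-port> <election-port>".
  sed -n -E 's/^server\.([0-9]+)[[:space:]]*=[[:space:]]*([^:]+):([0-9]+):([0-9]+).*$/\1 \2 \3 \4/p' "$1"
}

# Usage (path assumed from section 2.3.3):
#   list_zk_servers zooConfig/zoo.cfg
```

Three output lines with IDs 1, 2, and 3 indicate that all members are declared.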

2.4 Create the network

docker network create --driver bridge --subnet 172.23.0.0/25 --gateway 172.23.0.1 zookeeper_network
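The /25 prefix determines how many addresses the network holds, which matters because the compose file assigns static addresses to the containers. A small arithmetic sketch of the size of this subnet:

```shell
# Sketch: how many addresses a /25 prefix provides.
prefix=25
total=$(( 1 << (32 - prefix) ))   # 2^(32-25) addresses in the block
echo "addresses in /$prefix: $total"
echo "last address: 172.23.0.$(( total - 1 ))"
```

The block therefore spans 172.23.0.0 to 172.23.0.127, so any static address chosen for a container has to fall inside that range.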

2.5 Create the docker-compose.yml file

version: '2'
services:
  zoo1:
    image: zookeeper # image
    restart: always # restart policy
    container_name: zoo1
    hostname: zoo1
    ports:
      - "2181:2181"
    volumes:
      - "./zooConfig/zoo.cfg:/conf/zoo.cfg" # configuration file
      - "/mq/zookeeper1/data:/data"
      - "/mq/zookeeper1/datalog:/datalog"
    environment:
      ZOO_MY_ID: 1 # node ID
      ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888
    networks:
      default:
        ipv4_address: 172.23.0.11

  zoo2:
    image: zookeeper
    restart: always
    container_name: zoo2
    hostname: zoo2
    ports:
      - "2182:2181"
    volumes:
      - "./zooConfig/zoo.cfg:/conf/zoo.cfg"
      - "/mq/zookeeper2/data:/data"
      - "/mq/zookeeper2/datalog:/datalog"
    environment:
      ZOO_MY_ID: 2
      ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888
    networks:
      default:
        ipv4_address: 172.23.0.12

  zoo3:
    image: zookeeper
    restart: always
    container_name: zoo3
    hostname: zoo3
    ports:
      - "2183:2181"
    volumes:
      - "./zooConfig/zoo.cfg:/conf/zoo.cfg"
      - "/mq/zookeeper3/data:/data"
      - "/mq/zookeeper3/datalog:/datalog"
    environment:
      ZOO_MY_ID: 3
      ZOO_SERVERS: server.1=zoo1:2888:3888 server.2=zoo2:2888:3888 server.3=zoo3:2888:3888
    networks:
      default:
        ipv4_address: 172.23.0.13

  kafka1:
    image: wurstmeister/kafka # image
    restart: always
    container_name: kafka1
    hostname: kafka1
    ports:
      - 9092:9092
      - 9999:9999
    environment:
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.0.135:9092 # address advertised to external clients
      KAFKA_ADVERTISED_HOST_NAME: kafka1
      KAFKA_HOST_NAME: kafka1
      KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181
      KAFKA_ADVERTISED_PORT: 9092 # port advertised to external clients
      KAFKA_BROKER_ID: 0
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092
      JMX_PORT: 9999 # JMX port
    volumes:
      - /etc/localtime:/etc/localtime
      - "/mq/kafka1/logs:/kafka"
    links:
      - zoo1
      - zoo2
      - zoo3
    networks:
      default:
        ipv4_address: 172.23.0.14

  kafka2:
    image: wurstmeister/kafka
    restart: always
    container_name: kafka2
    hostname: kafka2
    ports:
      - 9093:9092
      - 9998:9999
    environment:
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.0.135:9093
      KAFKA_ADVERTISED_HOST_NAME: kafka2
      KAFKA_HOST_NAME: kafka2
      KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181
      KAFKA_ADVERTISED_PORT: 9093
      KAFKA_BROKER_ID: 1
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092
      JMX_PORT: 9999
    volumes:
      - /etc/localtime:/etc/localtime
      - "/mq/kafka2/logs:/kafka"
    links:
      - zoo1
      - zoo2
      - zoo3
    networks:
      default:
        ipv4_address: 172.23.0.15

  kafka3:
    image: wurstmeister/kafka
    restart: always
    container_name: kafka3
    hostname: kafka3
    ports:
      - 9094:9092
      - 9997:9999
    environment:
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://192.168.0.135:9094
      KAFKA_ADVERTISED_HOST_NAME: kafka3
      KAFKA_HOST_NAME: kafka3
      KAFKA_ZOOKEEPER_CONNECT: zoo1:2181,zoo2:2181,zoo3:2181
      KAFKA_ADVERTISED_PORT: 9094
      KAFKA_BROKER_ID: 2
      KAFKA_LISTENERS: PLAINTEXT://0.0.0.0:9092
      JMX_PORT: 9999
    volumes:
      - /etc/localtime:/etc/localtime
      - "/mq/kafka3/logs:/kafka"
    links:
      - zoo1
      - zoo2
      - zoo3
    networks:
      default:
        ipv4_address: 172.23.0.16

  kafka-manager:
    image: hlebalbau/kafka-manager:1.3.3.22
    restart: always
    container_name: kafka-manager
    hostname: kafka-manager
    ports:
      - 9000:9000
    links:
      - kafka1
      - kafka2
      - kafka3
      - zoo1
      - zoo2
      - zoo3
    environment:
      ZK_HOSTS: zoo1:2181,zoo2:2181,zoo3:2181
      KAFKA_BROKERS: kafka1:9092,kafka2:9093,kafka3:9094
      APPLICATION_SECRET: letmein
      KAFKA_MANAGER_AUTH_ENABLED: "true" # enable authentication
      KAFKA_MANAGER_USERNAME: "admin" # username
      KAFKA_MANAGER_PASSWORD: "admin" # password
      KM_ARGS: -Djava.net.preferIPv4Stack=true
    networks:
      default:
        ipv4_address: 172.23.0.10

networks:
  default:
    external:
      name: zookeeper_network
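The three Kafka services in this file differ only in a handful of values: the broker ID, the host port mapped to the broker, the host port mapped to JMX, and the static IP address. The following sketch prints those values per broker so they can be compared against the file; the function name broker_table is hypothetical, and the arithmetic simply reproduces the numbers used above.

```shell
# Sketch: tabulate the per-broker values used in docker-compose.yml.
# broker_table is a hypothetical helper for cross-checking the file by eye.
broker_table() {
  for i in 1 2 3; do
    # broker.id counts from 0, host ports count up from 9092,
    # JMX host ports count down from 9999, IPs count up from 172.23.0.14
    printf 'kafka%d broker.id=%d host_port=%d jmx_host_port=%d ip=172.23.0.%d\n' \
      "$i" "$(( i - 1 ))" "$(( 9091 + i ))" "$(( 10000 - i ))" "$(( 13 + i ))"
  done
}

broker_table
```

Inside the containers every broker still listens on 9092 and exposes JMX on 9999; only the host-side ports and the advertised addresses change.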

2.6 Starting and stopping the cluster

2.6.1 Start the cluster

docker-compose -f docker-compose.yml up -d

2.6.2 Stop the cluster

docker-compose -f docker-compose.yml stop

2.6.3 Stop a single node (docker rm -f also removes the container)

docker rm -f zoo1

2.7 Check that the ZooKeeper cluster is healthy

docker exec -it zoo1 bash

bin/zkServer.sh status # a Mode of leader or follower means the node is healthy
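To check all three nodes without opening a shell in each one, the Mode line can be extracted from the status output. This is a sketch only: zk_mode is a hypothetical helper, and the loop in the comment assumes the containers zoo1, zoo2, and zoo3 from section 2.5 are running.

```shell
# zk_mode is a hypothetical helper: it reads zkServer.sh status output on stdin
# and prints only the value of the "Mode:" line (for example leader or follower).
zk_mode() {
  sed -n 's/^Mode:[[:space:]]*//p'
}

# Usage on the Docker host (requires the running containers from section 2.5):
#   for n in zoo1 zoo2 zoo3; do
#     printf '%s: ' "$n"
#     docker exec "$n" bin/zkServer.sh status 2>/dev/null | zk_mode
#   done
```

A healthy three-node ensemble should report exactly one leader and two followers.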

2.8 Create a topic

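2.8.1 Verify: in theory, every list command should show the newly created topic

Problem encountered: with JMX_PORT set, the command fails with a port-already-in-use error, because the command-line tool inherits JMX_PORT and tries to bind the port the broker is already using.

Workaround: put unset JMX_PORT; in front of the Kafka topic-creation command.

unset JMX_PORT; kafka-topics.sh --create --zookeeper zoo1:2181 --replication-factor 1 --partitions 3 --topic test001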
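The verification itself can be done by listing topics through each ZooKeeper node. The sketch below assumes it is run inside one of the Kafka containers, where kafka-topics.sh is on the PATH and the names zoo1 to zoo3 resolve. RUN is a dry-run switch introduced here for illustration: by default the commands are only printed, and setting RUN to an empty value executes them.

```shell
# Sketch: list topics through each ZooKeeper node; every list should include test001.
# RUN is an assumed dry-run switch (not from the original): unset means "print only",
# RUN= (empty) means "really execute".
RUN="${RUN-echo}"

list_topics_everywhere() {
  for zk in zoo1 zoo2 zoo3; do
    # Unset JMX_PORT in a subshell so the CLI does not collide with the broker's JMX port
    ( unset JMX_PORT; $RUN kafka-topics.sh --list --zookeeper "$zk:2181" )
  done
}

list_topics_everywhere
```

Running it as RUN= list_topics_everywhere inside a Kafka container executes the three list commands for real.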

2.8.2 Produce messages

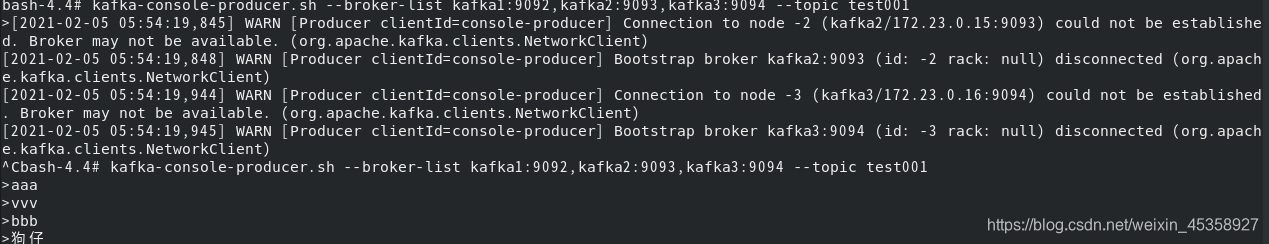

kafka-console-producer.sh --broker-list kafka1:9092,kafka2:9093,kafka3:9094 --topic test001

2.8.3 Consume messages

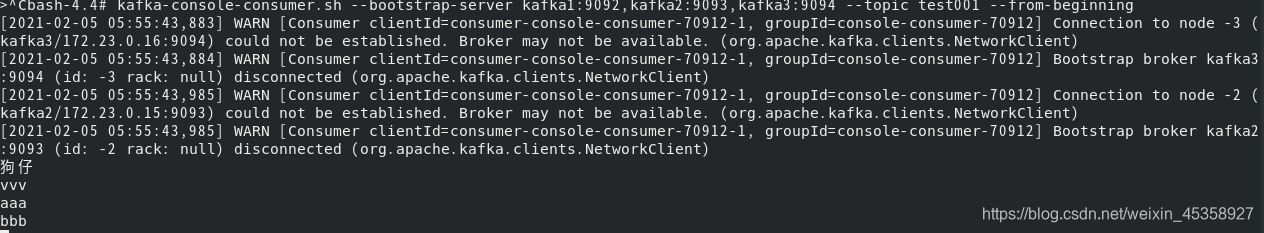

kafka-console-consumer.sh --bootstrap-server kafka1:9092,kafka2:9093,kafka3:9094 --topic test001 --from-beginning

2.9 Open the required ports in the firewall

firewall-cmd --add-port=9000/tcp --permanent # open port 9000

firewall-cmd --reload # reload the firewall rules

firewall-cmd --list-ports # list the open ports
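Port 9000 covers only the kafka-manager web UI. If clients outside the virtual machine also need to reach the brokers on the advertised ports 9092 to 9094, those ports would have to be opened as well. The sketch below only prints the firewall-cmd commands so they can be reviewed first; open_port_cmds is a hypothetical helper name.

```shell
# Sketch: print the firewall-cmd commands for a list of TCP ports.
# open_port_cmds is a hypothetical helper; it prints commands and changes nothing itself.
open_port_cmds() {
  for p in "$@"; do
    echo "firewall-cmd --add-port=${p}/tcp --permanent"
  done
  # A reload is needed for --permanent rules to take effect
  echo "firewall-cmd --reload"
}

open_port_cmds 9000 9092 9093 9094
```

After reviewing the output, it can be piped to a root shell, for example open_port_cmds 9000 9092 9093 9094 | sudo sh.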

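Copyright notice: This is an original article by weixin_45358927, licensed under CC 4.0 BY-SA. When reposting, please include a link to the original source and this notice.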