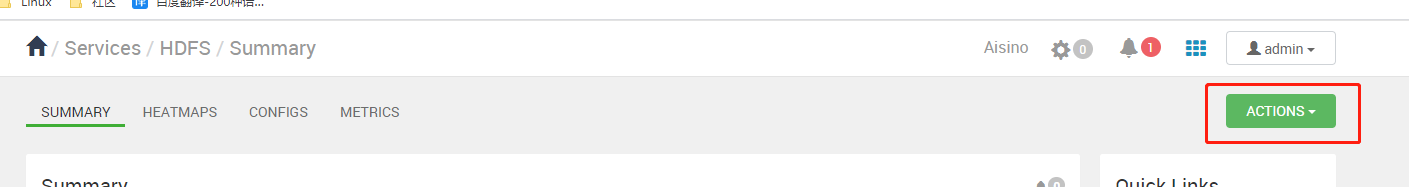

On the HDFS service page, click Actions.

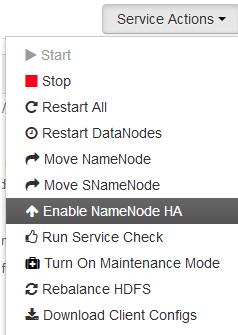

Click Enable NameNode HA.

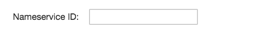

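Enter the nameservice ID.

Select the hosts to install on.

Review the configuration.

Run the manual commands (this step is a pitfall).

Install the required components.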

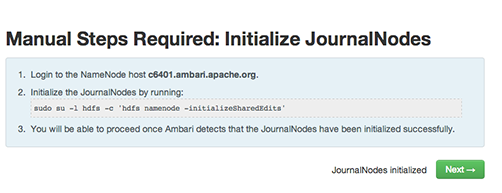

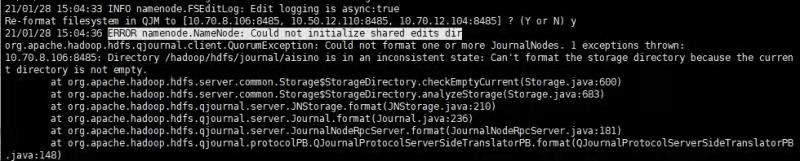

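Initialize the JournalNodes directories.

This step reports the error "Could not initialize shared edits dir" because the corresponding directory is not empty. The fix suggested online is to format the NameNode. I ignored that and clicked Next.

Start the components on the primary node.

On the primary node, run hdfs zkfc -formatZK. On the node where the additional NameNode is being installed, run hdfs namenode -bootstrapStandby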
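The two manual commands run on different hosts, which is easy to mix up. The Python sketch below only records that mapping. The function name and the `sudo -u hdfs` prefix are assumptions made for illustration, and the host names are the ones that appear in the logs further down.

```python
def ha_init_commands(primary_nn, additional_nn):
    """Return (host, argv) pairs in the order the commands should be run."""
    as_hdfs = ["sudo", "-u", "hdfs"]  # assumption: run as the hdfs service user
    return [
        # Initialize the HA state in ZooKeeper, on the existing NameNode host.
        (primary_nn, as_hdfs + ["hdfs", "zkfc", "-formatZK"]),
        # Copy the namespace metadata to the new NameNode so it can start as standby.
        (additional_nn, as_hdfs + ["hdfs", "namenode", "-bootstrapStandby"]),
    ]


if __name__ == "__main__":
    plan = ha_init_commands("aisino-master.test.com", "aisino-slave01.test.com")
    for host, argv in plan:
        print(host + ": " + " ".join(argv))
```

The sketch prints the plan rather than executing anything, so each command can be reviewed before it is run by hand on the right host.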

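From the next step on there are a few pitfalls.

Pitfall 1: the newly added NameNode fails to start. The error says port 8020 cannot be reached and no active NameNode exists.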

2021-01-28 15:20:56,127 - Getting jmx metrics from NN failed. URL: http://aisino-slave01.test.com:50070/jmx?qry=Hadoop:service=NameNode,name=FSNamesystem

Traceback (most recent call last):

File "/usr/lib/ambari-agent/lib/resource_management/libraries/functions/jmx.py", line 38, in get_value_from_jmx

_, data, _ = get_user_call_output(cmd, user=run_user, quiet=False)

File "/usr/lib/ambari-agent/lib/resource_management/libraries/functions/get_user_call_output.py", line 62, in get_user_call_output

raise ExecutionFailed(err_msg, code, files_output[0], files_output[1])

ExecutionFailed: Execution of 'curl -s 'http://aisino-slave01.test.com:50070/jmx?qry=Hadoop:service=NameNode,name=FSNamesystem' 1>/tmp/tmpCiS8Ie 2>/tmp/tmpU6EWZq' returned 7.

21/01/28 15:49:40 INFO ipc.Client: Retrying connect to server: aisino-slave01.test.com/10.70.12.104:8020. Already tried 0 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=1, sleepTime=1000 MILLISECONDS)

Operation failed: Call From aisino-slave01.test.com/10.70.12.104 to aisino-slave01.test.com:8020 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

2021-01-28 15:49:40,626 - call returned (255, '21/01/28 15:49:40 INFO ipc.Client: Retrying connect to server: aisino-slave01.test.com/10.70.12.104:8020. Already tried 0 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=1, sleepTime=1000 MILLISECONDS)\nOperation failed: Call From aisino-slave01.test.com/10.70.12.104 to aisino-slave01.test.com:8020 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused')

2021-01-28 15:49:40,627 - NameNode HA states: active_namenodes = [], standby_namenodes = [(u'nn1', 'aisino-master.test.com:50070')], unknown_namenodes = [(u'nn2', 'aisino-slave01.test.com:50070')]

2021-01-28 15:49:40,627 - Will retry 3 time(s), caught exception: No active NameNode was found.. Sleeping for 5 sec(s)
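"Connection refused" on port 8020 means nothing is listening on the new NameNode's RPC port. One way to confirm that independently of Ambari is a plain TCP probe. The helper below is a hypothetical sketch, not part of Ambari or Hadoop, and it only shows whether a port accepts connections, not whether the NameNode behind it is active.

```python
import socket


def is_port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and name-resolution failures.
        return False


# Example calls (not executed here), using the hosts and ports from the log above:
#   is_port_open("aisino-slave01.test.com", 8020)   # NameNode RPC
#   is_port_open("aisino-slave01.test.com", 50070)  # NameNode web UI / JMX
```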

At first I also checked the NameNode log:

2021-01-28 15:19:45,675 ERROR namenode.NameNode (NameNode.java:main(1715)) - Failed to start namenode.

java.io.FileNotFoundException: /hadoop/hdfs/namenode/current/VERSION (Permission denied)

at java.io.RandomAccessFile.open0(Native Method)

at java.io.RandomAccessFile.open(RandomAccessFile.java:316)

at java.io.RandomAccessFile.<init>(RandomAccessFile.java:243)

at org.apache.hadoop.hdfs.server.common.StorageInfo.readPropertiesFile(StorageInfo.java:250)

at org.apache.hadoop.hdfs.server.namenode.NNStorage.readProperties(NNStorage.java:660)

at org.apache.hadoop.hdfs.server.namenode.FSImage.recoverStorageDirs(FSImage.java:388)

at org.apache.hadoop.hdfs.server.namenode.FSImage.recoverTransitionRead(FSImage.java:227)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.loadFSImage(FSNamesystem.java:1090)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.loadFromDisk(FSNamesystem.java:714)

at org.apache.hadoop.hdfs.server.namenode.NameNode.loadNamesystem(NameNode.java:632)

at org.apache.hadoop.hdfs.server.namenode.NameNode.initialize(NameNode.java:694)

at org.apache.hadoop.hdfs.server.namenode.NameNode.<init>(NameNode.java:937)

at org.apache.hadoop.hdfs.server.namenode.NameNode.<init>(NameNode.java:910)

at org.apache.hadoop.hdfs.server.namenode.NameNode.createNameNode(NameNode.java:1643)

at org.apache.hadoop.hdfs.server.namenode.NameNode.main(NameNode.java:1710)

The log points to a permission problem on the NameNode directory, so I reset its ownership:

chown hdfs:hadoop -R /hadoop/hdfs/
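To see which files trigger the "Permission denied" error before or after running chown, the tree can be scanned for entries with an unexpected owner. This is a minimal sketch with a hypothetical function name. It checks only the owning user, not the group, and it should be run as root so that every directory is readable.

```python
import os


def paths_not_owned_by(root, expected_uid):
    """List paths at or under root whose owner uid is not expected_uid."""
    mismatched = []
    if os.lstat(root).st_uid != expected_uid:
        mismatched.append(root)
    # os.walk silently skips directories it cannot read.
    for dirpath, dirnames, filenames in os.walk(root):
        for name in dirnames + filenames:
            path = os.path.join(dirpath, name)
            if os.lstat(path).st_uid != expected_uid:
                mismatched.append(path)
    return mismatched


# Example (assumes an hdfs account exists on the host):
#   import pwd
#   paths_not_owned_by("/hadoop/hdfs", pwd.getpwnam("hdfs").pw_uid)
```

An empty list means every entry under the directory already belongs to the expected user.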

Solution

The pitfall is in step 6: to turn off safe mode, change enter to leave in the safe mode command, then click Retry.
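Safe mode is toggled through `hdfs dfsadmin -safemode`, so changing enter to leave amounts to swapping one argument. The sketch below builds that command and interprets the text printed by the `get` action. Both function names are hypothetical, and the parsing assumes each NameNode reports a line beginning with "Safe mode is ON" or "Safe mode is OFF".

```python
def safemode_command(action):
    """Build the argv for `hdfs dfsadmin -safemode <action>`."""
    allowed = ("enter", "leave", "get", "wait")
    if action not in allowed:
        raise ValueError("unsupported safemode action: %r" % (action,))
    return ["hdfs", "dfsadmin", "-safemode", action]


def any_namenode_in_safemode(get_output):
    """Return True if the output of `-safemode get` reports any NameNode as ON."""
    return any(
        line.strip().startswith("Safe mode is ON")
        for line in get_output.splitlines()
    )


# Example (not executed here): run the command as the hdfs user, e.g.
#   subprocess.run(safemode_command("leave"), check=True)
```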

Pitfall 2: the ZKFailoverController cannot be started from the web UI.

Traceback (most recent call last):

File "/var/lib/ambari-agent/cache/stacks/HDP/3.0/services/HDFS/package/scripts/zkfc_slave.py", line 201, in <module>

ZkfcSlave().execute()

File "/usr/lib/ambari-agent/lib/resource_management/libraries/script/script.py", line 352, in execute

method(env)

File "/var/lib/ambari-agent/cache/stacks/HDP/3.0/services/HDFS/package/scripts/zkfc_slave.py", line 75, in start

ZkfcSlaveDefault.start_static(env, upgrade_type)

File "/var/lib/ambari-agent/cache/stacks/HDP/3.0/services/HDFS/package/scripts/zkfc_slave.py", line 100, in start_static

create_log_dir=True

File "/var/lib/ambari-agent/cache/stacks/HDP/3.0/services/HDFS/package/scripts/utils.py", line 261, in service

Execute(daemon_cmd, not_if=process_id_exists_command, environment=hadoop_env_exports)

File "/usr/lib/ambari-agent/lib/resource_management/core/base.py", line 166, in __init__

self.env.run()

File "/usr/lib/ambari-agent/lib/resource_management/core/environment.py", line 160, in run

self.run_action(resource, action)

File "/usr/lib/ambari-agent/lib/resource_management/core/environment.py", line 124, in run_action

provider_action()

File "/usr/lib/ambari-agent/lib/resource_management/core/providers/system.py", line 263, in action_run

returns=self.resource.returns)

File "/usr/lib/ambari-agent/lib/resource_management/core/shell.py", line 72, in inner

result = function(command, **kwargs)

File "/usr/lib/ambari-agent/lib/resource_management/core/shell.py", line 102, in checked_call

tries=tries, try_sleep=try_sleep, timeout_kill_strategy=timeout_kill_strategy, returns=returns)

File "/usr/lib/ambari-agent/lib/resource_management/core/shell.py", line 150, in _call_wrapper

result = _call(command, **kwargs_copy)

File "/usr/lib/ambari-agent/lib/resource_management/core/shell.py", line 314, in _call

raise ExecutionFailed(err_msg, code, out, err)

resource_management.core.exceptions.ExecutionFailed: Execution of 'ambari-sudo.sh su hdfs -l -s /bin/bash -c 'ulimit -c unlimited ; /usr/hdp/3.1.4.0-315/hadoop/bin/hdfs --config /usr/hdp/3.1.4.0-315/hadoop/conf --daemon start zkfc'' returned 1.

Solution:

On the node where ZKFC is deployed, run

hdfs --daemon stop zkfc

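ZKFC was already running, but the web UI does not seem to recognize an instance that it did not start itself. After stopping it, click Retry.

Finally, wait for the remaining steps to finish.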
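Before stopping the daemon, it can help to confirm that a ZKFC process really is running outside the web UI's control. The sketch below scans process-list text for the ZKFC main class, `org.apache.hadoop.hdfs.tools.DFSZKFailoverController`. The function name is hypothetical, and the input is assumed to be the output of `ps -eo pid,args`.

```python
ZKFC_MAIN_CLASS = "org.apache.hadoop.hdfs.tools.DFSZKFailoverController"


def find_zkfc_pids(ps_output):
    """Return PIDs of processes whose command line mentions the ZKFC main class."""
    pids = []
    for line in ps_output.splitlines():
        parts = line.strip().split(None, 1)  # -> [pid, full command line]
        if len(parts) == 2 and parts[0].isdigit() and ZKFC_MAIN_CLASS in parts[1]:
            pids.append(int(parts[0]))
    return pids


# Example (not executed here):
#   import subprocess
#   out = subprocess.run(["ps", "-eo", "pid,args"],
#                        capture_output=True, text=True).stdout
#   print(find_zkfc_pids(out))
```

A non-empty result while the web UI still shows ZKFC as stopped matches the situation described here.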

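Copyright notice: this is an original article by weixin_41772761, licensed under CC 4.0 BY-SA. When reposting, include a link to the original source and this notice.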