I. Resource preparation (run on every machine)

| Hostname | Public IP | Private IP |
|---|---|---|
| k8s-master01 | 39.104.173.77 | 172.24.114.3 |
| k8s-node01 | 39.104.179.210 | 172.24.114.4 |
| k8s-node02 | 39.104.173.12 | 172.24.114.1 |
| k8s-node03 | 39.104.177.2 | 172.24.114.2 |

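- Set the hostname

# On the VM 172.24.114.3, set the k8s-master01 node

hostnamectl set-hostname k8s-master01

bash # start a new shell so the new hostname shows up immediately

# On the VM 172.24.114.4, set the k8s-node01 node

hostnamectl set-hostname k8s-node01

bash # start a new shell so the new hostname shows up immediately

# On the VM 172.24.114.1, set the k8s-node02 node

hostnamectl set-hostname k8s-node02

bash # start a new shell so the new hostname shows up immediately

# On the VM 172.24.114.2, set the k8s-node03 node

hostnamectl set-hostname k8s-node03

bash # start a new shell so the new hostname shows up immediately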
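Since the same step is repeated on four machines, the IP-to-hostname mapping from the table can be kept in one small function. This is only a sketch: the function name `hostname_for_ip` is hypothetical, and the `hostname -I` lookup in the usage comment assumes the private address is the first one reported on the node.

```shell
#!/usr/bin/env bash
# Map a private IP from the host table to its hostname.
# hostname_for_ip is a hypothetical helper, not part of any tool.
hostname_for_ip() {
  case "$1" in
    172.24.114.3) echo "k8s-master01" ;;
    172.24.114.4) echo "k8s-node01" ;;
    172.24.114.1) echo "k8s-node02" ;;
    172.24.114.2) echo "k8s-node03" ;;
    *) echo "unknown IP: $1" >&2; return 1 ;;
  esac
}

# Usage on a node (assumes the private IP is listed first by `hostname -I`):
#   ip=$(hostname -I | awk '{print $1}')
#   hostnamectl set-hostname "$(hostname_for_ip "$ip")"
```

The function only prints a name; the actual `hostnamectl` call stays in the usage comment so nothing changes until you run it deliberately.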

- Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

- Let iptables see bridged traffic

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
br_netfilter
EOF

sudo modprobe br_netfilter # load the module now; the file above only takes effect at boot

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

sudo sysctl --system

- Set SELinux to permissive mode (effectively disabling it)

sudo setenforce 0

sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

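- Disable swap (Kubernetes expects swap to be off, which also keeps performance predictable)

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab # comment out the swap entries so swap stays off after a reboot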
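To preview what the `sed` expression does before touching `/etc/fstab`, the same substitution can be run against a file and printed to standard output. This is a sketch under assumptions: `comment_out_swap` is a hypothetical helper, and unlike the command above it skips lines that are already commented so they do not collect a second `#`.

```shell
#!/usr/bin/env bash
# Print the given fstab-style file with every active swap line commented out.
# Lines that already start with '#' are left alone.
comment_out_swap() {
  sed -E '/^[[:space:]]*#/!s/.*swap.*/#&/' "$1"
}

# Usage (read-only preview, nothing is modified):
#   comment_out_swap /etc/fstab
```

Because the function never edits in place, it is safe to run on a live system and compare against the original file first.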

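- Configure /etc/hosts

# Master and node IPs; the values below match the host table above, change them for your own machines

cat >> /etc/hosts << EOF
172.24.114.3 k8s-master01
172.24.114.4 k8s-node01
172.24.114.1 k8s-node02
172.24.114.2 k8s-node03
EOF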
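Appending with `>>` adds duplicate lines every time the step is rerun. A minimal sketch of an idempotent variant follows; `add_host_entry` is a hypothetical helper, and the hostnames in the usage comment are the ones from the host table.

```shell
#!/usr/bin/env bash
# Append "IP hostname" to a hosts file only if the hostname is not mapped yet.
# $1 = hosts file, $2 = IP address, $3 = hostname
add_host_entry() {
  if ! grep -qE "[[:space:]]$3([[:space:]]|\$)" "$1" 2>/dev/null; then
    printf '%s %s\n' "$2" "$3" >> "$1"
  fi
}

# Usage (as root):
#   add_host_entry /etc/hosts 172.24.114.3 k8s-master01
#   add_host_entry /etc/hosts 172.24.114.4 k8s-node01
```

Running the same call twice leaves a single line in the file, so the step can be repeated safely on every node.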

6.1 Install Docker

# Remove any older Docker versions

sudo yum remove docker \
docker-client \
docker-client-latest \
docker-common \
docker-latest \
docker-latest-logrotate \
docker-logrotate \
docker-engine

# Install Docker

sudo yum install -y yum-utils

sudo yum-config-manager \
--add-repo \
http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

sudo yum makecache fast

sudo yum install docker-ce docker-ce-cli containerd.io -y

sudo systemctl start docker

sudo systemctl enable docker

sudo systemctl status docker

6.2 Change the Docker cgroup driver

Running kubeadm init to initialize the cluster produces this warning:

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd".

# Create the Docker daemon configuration file (if the file already exists, merge this key into it by hand instead)

cat > /etc/docker/daemon.json << EOF
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

# Restart Docker

systemctl restart docker

systemctl status docker
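It can help to confirm the setting before moving on to kubeadm. The sketch below only inspects the configuration file; `uses_systemd_cgroup` is a hypothetical helper. On a running host, `docker info --format '{{.CgroupDriver}}'` reports the driver Docker is actually using.

```shell
#!/usr/bin/env bash
# Succeed if the given daemon.json selects the systemd cgroup driver.
# $1 = path to daemon.json
uses_systemd_cgroup() {
  grep -q 'native\.cgroupdriver=systemd' "$1"
}

# Usage:
#   uses_systemd_cgroup /etc/docker/daemon.json && echo "systemd driver configured"
#   docker info --format '{{.CgroupDriver}}'   # live check after the restart
```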

- Configure the Aliyun Kubernetes package repository

Error: [Errno -1] repomd.xml signature could not be verified for kubernetes Trying other mirror.

Reference: https://github.com/kubernetes/kubernetes/issues/60134

Workaround: set repo_gpgcheck=0

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
#repo_gpgcheck=1
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

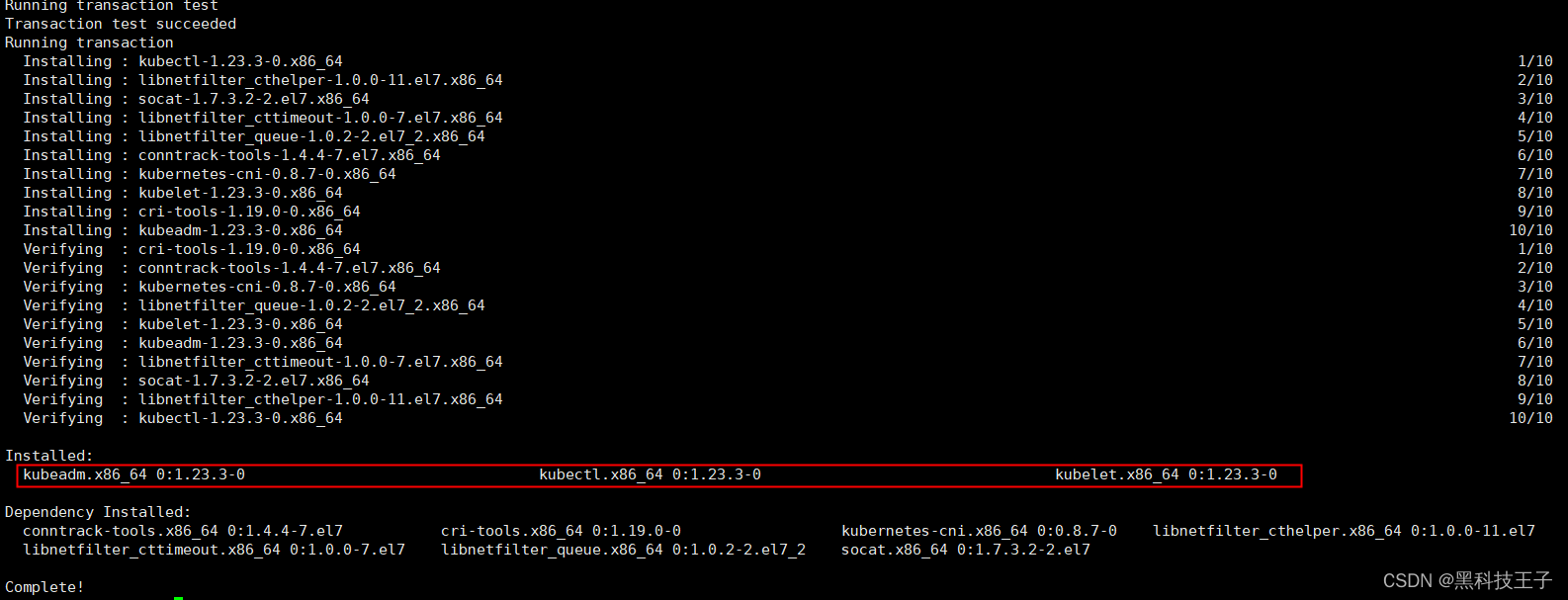

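- Install kubelet, kubeadm, and kubectl

sudo yum update -y # refresh after setting repo_gpgcheck=0

# sudo yum install -y kubelet-1.19.4 kubeadm-1.19.4 kubectl-1.19.4 # optionally pin a version from the GitHub releases

# sudo yum install -y kubelet-1.20.0 kubeadm-1.20.0 kubectl-1.20.0 # optionally pin a version from the GitHub releases

sudo yum install -y kubelet kubeadm kubectl # preferably leave the version unpinned; the latest is installed by default

sudo systemctl enable --now kubelet

sudo systemctl start kubelet

# sudo systemctl status kubelet  At this point kubelet is not healthy yet. It becomes healthy on the master after kubeadm init, and on each worker after the node joins the cluster.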

- Verify the installed tools

yum list installed | grep kubelet

yum list installed | grep kubeadm

yum list installed | grep kubectl

kubelet --version # prints the installed version, for example: Kubernetes v1.23.3

II. Creating the cluster with kubeadm

Initialize the cluster with kubeadm # run on the master

Be sure to set --apiserver-advertise-address to the master's IP address, here 172.24.114.3.

# 172.24.114.3 is the master node's IP; change it to your own master IP

kubeadm init --apiserver-advertise-address 172.24.114.3 \
--image-repository registry.aliyuncs.com/google_containers \
--pod-network-cidr 10.244.0.0/16 \
--service-cidr 10.96.0.0/12

# --kubernetes-version v1.23.3 \ # optional flag; without it the latest version is used

- If this step fails, the master IP passed to --apiserver-advertise-address (172.24.114.3) may be wrong or missing. Reset with:

kubeadm reset

- When prompted, enter: y

- Once the reset has finished, rerun the kubeadm init … command above

# Connect the kubectl client to the cluster

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Note: run the following on the worker nodes

# Add a worker node to the cluster ---> run on the worker node node1

# This command is generated by kubeadm init; keep a copy of it

kubeadm join 172.24.114.3:6443 --token bofh8w.5r6qwmvargj3d0do \
--discovery-token-ca-cert-hash sha256:0724d03bf5ca008808b4dc9c68643c90e54d36733a487dc7d730dca35a952b89

[root@iZ0jlhvtxignmaozy30vffZ ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

iz0jlhvtxignmaozy30vffz NotReady master 8m19s v1.19.4

iz0jlhvtxignmaozy30vfgz NotReady <none> 21s v1.19.4

- Add the flannel pod network --> run on the master node

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

- If the URL above is slow or does not respond, copy the content below into kube-flannel.yml

- cat kube-flannel.yml

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        #image: flannelcni/flannel-cni-plugin:v1.0.1 for ppc64le and mips64le (dockerhub limitations may apply)
        image: rancher/mirrored-flannelcni-flannel-cni-plugin:v1.0.1
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        #image: flannelcni/flannel:v0.16.3 for ppc64le and mips64le (dockerhub limitations may apply)
        image: rancher/mirrored-flannelcni-flannel:v0.16.3
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        #image: flannelcni/flannel:v0.16.3 for ppc64le and mips64le (dockerhub limitations may apply)
        image: rancher/mirrored-flannelcni-flannel:v0.16.3
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
        - name: xtables-lock
          mountPath: /run/xtables.lock
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg
      - name: xtables-lock
        hostPath:
          path: /run/xtables.lock
          type: FileOrCreate

kubectl apply -f ./kube-flannel.yml # once this is applied, the node status changes from NotReady to Ready
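The "Network" value in the manifest's net-conf.json has to agree with the --pod-network-cidr passed to kubeadm init (both are 10.244.0.0/16 in this walkthrough). A small sketch for checking that before applying the manifest; `flannel_network` and `cidr_matches` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# Print the "Network" CIDR found in a flannel manifest.
# $1 = path to kube-flannel.yml
flannel_network() {
  sed -n 's/.*"Network":[[:space:]]*"\([^"]*\)".*/\1/p' "$1" | head -n 1
}

# Succeed if the manifest's Network equals the expected pod CIDR.
# $1 = path to kube-flannel.yml, $2 = pod CIDR given to kubeadm init
cidr_matches() {
  [ "$(flannel_network "$1")" = "$2" ]
}

# Usage:
#   cidr_matches ./kube-flannel.yml 10.244.0.0/16 || echo "pod CIDR mismatch"
```

If the two values differ, either edit net-conf.json in the manifest or rerun kubeadm init with the matching CIDR.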

12. Check the cluster from the control-plane node (master)

[root@iZ0jlhvtxignmaozy30vffZ ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

iz0jlhvtxignmaozy30vffz Ready master 11m v1.19.4

iz0jlhvtxignmaozy30vfgz Ready <none> 3m14s v1.19.4

[root@iZ0jlhvtxignmaozy30vffZ ~]# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-6d56c8448f-n7f9k 1/1 Running 0 20m

kube-system coredns-6d56c8448f-tz6m4 1/1 Running 0 20m

kube-system etcd-iz0jlhvtxignmaozy30vffz 1/1 Running 0 20m

kube-system kube-apiserver-iz0jlhvtxignmaozy30vffz 1/1 Running 0 20m

kube-system kube-controller-manager-iz0jlhvtxignmaozy30vffz 1/1 Running 0 20m

kube-system kube-flannel-ds-7j7jn 1/1 Running 0 9m51s

kube-system kube-flannel-ds-fdkbv 1/1 Running 0 9m51s

kube-system kube-proxy-fdz49 1/1 Running 0 12m

kube-system kube-proxy-kgzcp 1/1 Running 0 20m

kube-system kube-scheduler-iz0jlhvtxignmaozy30vffz 1/1 Running 0 20m

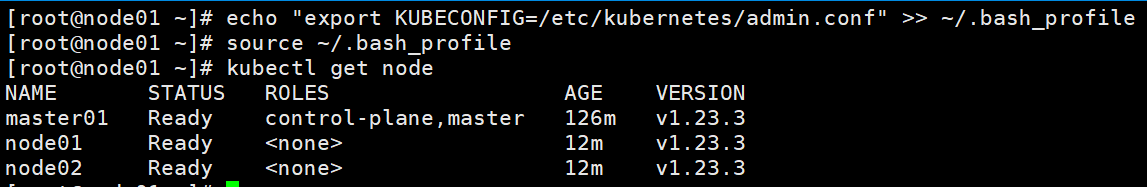

13. Worker nodes (configure admin.conf on k8s-node01, k8s-node02, and k8s-node03 so that kubectl get … works on the workers)

# Copy the file from k8s-master01 to k8s-node01, k8s-node02, and k8s-node03

# 172.24.114.4 is the k8s-node01 node

scp -r /etc/kubernetes/admin.conf root@172.24.114.4:/etc/kubernetes/

# 172.24.114.1 is the k8s-node02 node

scp -r /etc/kubernetes/admin.conf root@172.24.114.1:/etc/kubernetes/

# 172.24.114.2 is the k8s-node03 node

scp -r /etc/kubernetes/admin.conf root@172.24.114.2:/etc/kubernetes/

# Run on k8s-node01, k8s-node02, and k8s-node03

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source ~/.bash_profile

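III. Adding a new worker node

14. Run steps 0-9 in order

15. Join the new worker node to the cluster

# On the new worker node (172.24.114.3:6443 is the master's API server endpoint used earlier)

kubeadm join 172.24.114.3:6443 --token <token> --discovery-token-ca-cert-hash sha256:<hash>

kubeadm token list # on the master, if the cluster was created less than 24 hours ago: look up <token>

or

kubeadm token create # on the master, if the cluster is more than 24 hours old: create a new <token>

openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | \
openssl dgst -sha256 -hex | sed 's/^.* //' # on the master: compute <hash>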
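Once the token and hash are known, the join command can be assembled from its three parts. The sketch below shows that assembly; `build_join_cmd` is a hypothetical helper, and the endpoint 172.24.114.3:6443 is the master address used earlier in this walkthrough. kubeadm can also print a ready-made command with `kubeadm token create --print-join-command` on the master.

```shell
#!/usr/bin/env bash
# Print a kubeadm join command.
# $1 = API server endpoint (host:port)
# $2 = bootstrap token
# $3 = CA certificate hash in hex, without the "sha256:" prefix
build_join_cmd() {
  printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash sha256:%s\n' "$1" "$2" "$3"
}

# Usage on the master, then run the printed command on the new worker:
#   token=$(kubeadm token create)
#   hash=$(openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt \
#     | openssl rsa -pubin -outform der 2>/dev/null \
#     | openssl dgst -sha256 -hex | sed 's/^.* //')
#   build_join_cmd 172.24.114.3:6443 "$token" "$hash"
```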

16. Configure the kubectl client on the new node

Follow step 13.

IV. Installing NFS

| Hostname | Public IP | Private IP |
|---|---|---|
| k8s-master01 | 39.104.173.77 | 172.24.114.3 |
| k8s-node01 | 39.104.179.210 | 172.24.114.4 |
| k8s-node02 | 39.104.173.12 | 172.24.114.1 |
| k8s-node03 | 39.104.177.2 | 172.24.114.2 |

4.0 Set up NFS (on k8s-master01, 172.24.114.3)

server: 172.24.114.3

path: /data/redis

4.1 Run on the host that provides NFS storage, here the k8s-master01 node

yum install -y nfs-utils # run this on every node, master and workers

echo "/data/redis *(insecure,rw,sync,no_root_squash)" > /etc/exports

# Create the shared directory

mkdir -p /data/redis

# Run on the master

chmod -R 777 /data/redis

# Apply the export configuration

exportfs -r

# Check that the configuration took effect

exportfs

# Enable and start the NFS services

systemctl enable rpcbind && systemctl start rpcbind

systemctl enable nfs && systemctl start nfs

4.2 Run on the worker hosts (k8s-node01, k8s-node02, k8s-node03)

yum install -y nfs-utils # run this on every node, master and workers

showmount -e 172.24.114.3 # check that the worker can see the NFS export on the master
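The directory in /etc/exports must be the same one the provisioner later mounts (/data/redis in this walkthrough), so a quick check of the exports file can catch a mismatch early. This is a sketch; `is_exported` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Succeed if the exports file has an entry for the given directory.
# $1 = exports file, $2 = exported directory
is_exported() {
  grep -qE "^$2[[:space:]]" "$1"
}

# Usage on the NFS server:
#   is_exported /etc/exports /data/redis || echo "/data/redis is not exported"
# Optional manual test from a worker (as root):
#   mount -t nfs 172.24.114.3:/data/redis /mnt && touch /mnt/test && umount /mnt
```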

V. Configuring StorageClass storage

vim redis-storage.yaml

## Create a StorageClass
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: nfs-storage
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: redis-provisioner # must match the value of the PROVISIONER_NAME env variable in the Deployment below
parameters:
  archiveOnDelete: "true" ## whether to archive the PV contents when the PV is deleted
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  labels:
    app: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
spec:
  replicas: 1
  strategy:
    type: Recreate
  selector:
    matchLabels:
      app: nfs-client-provisioner
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccountName: nfs-client-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/nfs-subdir-external-provisioner:v4.0.2
          # resources:
          #   limits:
          #     cpu: 10m
          #   requests:
          #     cpu: 10m
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: redis-provisioner
            - name: NFS_SERVER
              value: 172.24.114.3 ## address of your own NFS server
            - name: NFS_PATH
              value: /data/redis ## directory shared by the NFS server
      volumes:
        - name: nfs-client-root
          nfs:
            server: 172.24.114.3
            path: /data/redis
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: nfs-client-provisioner-runner
rules:
  - apiGroups: [""]
    resources: ["nodes"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["create", "update", "patch"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-client-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: ClusterRole
  name: nfs-client-provisioner-runner
  apiGroup: rbac.authorization.k8s.io
---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["endpoints"]
    verbs: ["get", "list", "watch", "create", "update", "patch"]
---
kind: RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: leader-locking-nfs-client-provisioner
  # replace with namespace where provisioner is deployed
  namespace: default
subjects:
  - kind: ServiceAccount
    name: nfs-client-provisioner
    # replace with namespace where provisioner is deployed
    namespace: default
roleRef:
  kind: Role
  name: leader-locking-nfs-client-provisioner
  apiGroup: rbac.authorization.k8s.io

kubectl apply -f redis-storage.yaml
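Before installing anything that depends on the StorageClass, a throwaway claim is a cheap way to see whether dynamic provisioning works. The sketch below writes such a claim to a file; the helper `write_test_pvc` and the claim name `nfs-test-claim` are hypothetical, while `nfs-storage` is the StorageClass name defined above.

```shell
#!/usr/bin/env bash
# Write a small test PersistentVolumeClaim that uses the nfs-storage class.
# $1 = output file
write_test_pvc() {
  cat > "$1" <<'EOF'
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: nfs-test-claim
  namespace: default
spec:
  storageClassName: nfs-storage
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Mi
EOF
}

# Usage:
#   write_test_pvc test-pvc.yaml
#   kubectl apply -f test-pvc.yaml
#   kubectl get pvc nfs-test-claim        # STATUS should become Bound
#   kubectl delete -f test-pvc.yaml
```

If the claim stays Pending, the provisioner pod's logs and the NFS export are the first places to look.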

VI. Installing Helm

6.1 Release download page: https://github.com/helm/helm/releases

6.2 Download the package helm-v3.6.3-linux-amd64.tar.gz using either of the following

wget https://get.helm.sh/helm-v3.6.3-linux-amd64.tar.gz

# curl -L https://get.helm.sh/helm-v3.6.3-linux-amd64.tar.gz -o helm-v3.6.3-linux-amd64.tar.gz

6.3 Extract and install

[root@k8s-master01 ~]# tar -zxvf helm-v3.6.3-linux-amd64.tar.gz

[root@k8s-master01 ~]# cd linux-amd64/

[root@k8s-master01 linux-amd64]# ls

helm LICENSE README.md

[root@k8s-master01 linux-amd64]# cp helm /usr/local/bin/

[root@k8s-master01 ~]# helm version

version.BuildInfo{Version:"v3.6.2", GitCommit:"ee407bdf364942bcb8e8c665f82e15aa28009b71", GitTreeState:"clean", GoVersion:"go1.16.5"}

VII. Installing a highly available Redis cluster (redis-ha) with Helm

7.1 Search the official Helm hub for charts:

[root@k8s-master01 ~]# helm search hub redis

URL CHART VERSION APP VERSION DESCRIPTION

https://artifacthub.io/packages/helm/choerodon/... 16.4.1 6.2.6 Redis(TM) is an open source, advanced key-value...

https://artifacthub.io/packages/helm/wenerme/redis 16.8.5 6.2.6 Redis(TM) is an open source, advanced key-value...

https://artifacthub.io/packages/helm/bitnami-ak... 16.8.2 6.2.6 Redis(TM) is an open source, advanced key-value...

https://artifacthub.io/packages/helm/wener/redis 16.8.5 6.2.6 Redis(TM) is an open source, advanced key-value...

https://artifacthub.io/packages/helm/pascaliske... 0.0.3 6.2.6 A Helm chart for Redis

https://artifacthub.io/packages/helm/bitnami/redis 16.8.5 6.2.6 Redis(TM) is an open source, advanced key-value...

https://artifacthub.io/packages/helm/proto-appl... 12.7.7 6.0.11 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/authorizat... 12.7.7 6.0.11 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/truecharts... 2.0.38 6.2.6 Open source, advanced key-value store.

https://artifacthub.io/packages/helm/cloudnativ... 8.0.1 5.0.5 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/drycc-cana... 1.0.0 A Redis database for use inside a Kubernetes cl...

https://artifacthub.io/packages/helm/riftbit/redis 15.4.0 6.2.5 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/taalhuizen... 12.7.7 6.0.11 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/jfrog/redis 12.10.1 6.0.12 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/mmontes/redis 0.1.0 1.16.0 Redis with metrics compatible with ARM

https://artifacthub.io/packages/helm/agendaserv... 12.7.7 6.0.11 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/conduction... 12.7.7 6.0.11 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/contacten-... 12.7.7 6.0.11 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/appuio/redis 1.3.4 6.2.1 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/openstack-... 0.1.1 v4.0.1 OpenStack-Helm Redis

https://artifacthub.io/packages/helm/kubesphere... 0.3.5 6.0.9 Redis is an open source (BSD licensed), in-memo...

https://artifacthub.io/packages/helm/groundhog2... 0.4.11 6.2.6 A Helm chart for Redis on Kubernetes

https://artifacthub.io/packages/helm/drycc/redis 1.2.0 A Redis database for use inside a Kubernetes cl...

https://artifacthub.io/packages/helm/notificati... 12.7.7 6.0.11 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/redis-char... 0.1.0 v0.2.0 A Helm chart for redis-cart service

https://artifacthub.io/packages/helm/cloudnativ... 0.4.1 4.0.12-alpine A pure in-memory redis cache, using statefulset...

https://artifacthub.io/packages/helm/bitnami/re... 7.4.6 6.2.6 Redis(TM) is an open source, scalable, distribu...

https://artifacthub.io/packages/helm/bitnami-ak... 7.4.5 6.2.6 Redis(TM) is an open source, scalable, distribu...

https://artifacthub.io/packages/helm/inspur/red... 0.0.2 5.0.6 Highly available Kubernetes implementation of R...

https://artifacthub.io/packages/helm/kubesphere... 3.4.6 1.3.4 Prometheus exporter for Redis metrics

https://artifacthub.io/packages/helm/dandydev-c... 4.15.0 6.2.5 This Helm chart provides a highly available Red...

https://artifacthub.io/packages/helm/cloudnativ... 3.4.2 5.0.3 Highly available Kubernetes implementation of R...

https://artifacthub.io/packages/helm/hkube/redi... 3.6.1005 5.0.5 Highly available Kubernetes implementation of R...

https://artifacthub.io/packages/helm/kfirfer/re... 4.12.9 6.0.11 This Helm chart provides a highly available Red...

https://artifacthub.io/packages/helm/aigisuk/re... 0.1.8 1.7.4.2-r0 A lightweight redis proxy deployment/daemonset ...

https://artifacthub.io/packages/helm/softonic/r... 0.3.0 6.0.6 A Helm chart for sharded redis

https://artifacthub.io/packages/helm/riftbit/re... 6.3.7 6.2.5 Open source, advanced key-value store. It is of...

https://artifacthub.io/packages/helm/kfirfer/re... 0.1.2 latest A Helm chart for redis-commander

https://artifacthub.io/packages/helm/tyk-helm/s... 0.1.1 A Simple Helm chart for running redis for CI

https://artifacthub.io/packages/helm/prometheus... 4.6.0 1.27.0 Prometheus exporter for Redis metrics

https://artifacthub.io/packages/helm/wener/prom... 4.6.0 1.27.0 Prometheus exporter for Redis metrics

https://artifacthub.io/packages/helm/cloudnativ... 1.0.2 0.28.0 Prometheus exporter for Redis metrics

https://artifacthub.io/packages/helm/prometheus... 4.0.0 1.11.1 Prometheus exporter for Redis metrics

https://artifacthub.io/packages/helm/wenerme/pr... 4.6.0 1.27.0 Prometheus exporter for Redis metrics

https://artifacthub.io/packages/helm/hmdmph/red... 1.0.2 1.0.0 Labelling redis pods as master/slave periodical...

https://artifacthub.io/packages/helm/wyrihaximu... 1.0.5 v1.0.1 Redis Database Assignment Operator

https://artifacthub.io/packages/helm/appscode/k... 2021.10.29 v0.4.0 Kubeform Provider Azurerm Redis Custom Resource...

https://artifacthub.io/packages/helm/appscode/k... 2021.10.29 v0.4.0 Kubeform Provider Google Redis Custom Resource ...

https://artifacthub.io/packages/helm/riftbit/re... 0.1.0 v1.0.0 RedisInsight - The GUI for Redis

https://artifacthub.io/packages/helm/enapter/keydb 0.34.0 6.2.2 A Helm chart for KeyDB multimaster setup

https://artifacthub.io/packages/helm/pozetron/k... 0.5.3 v6.0.16 A Helm chart for multimaster KeyDB optionally w...

https://artifacthub.io/packages/helm/riftbit/lo... 2.5.1 v2.1.0 Loki: like Prometheus, but for logs.

https://artifacthub.io/packages/helm/ananace-ch... 4.1.0 3.1.0 An IP address management (IPAM) and data center...

https://artifacthub.io/packages/helm/oauth2-pro... 6.2.0 7.2.0 A reverse proxy that provides authentication wi...

https://artifacthub.io/packages/helm/sossickd/p... 0.1.0 5.0.0 A Helm chart for the php guest book.

https://artifacthub.io/packages/helm/camptocamp... 3.0.1 A Helm chart for the Spotahome Redis Operator

https://artifacthub.io/packages/helm/ectobit/rs... 0.8.13 3.1-alpine3.15.3 Rspamd Helm chart for Kubernetes

https://artifacthub.io/packages/helm/spring-res... 0.1.0 1.16.0 A SpringBoot Helm chart for Kubernetes

[root@k8s-master01 ~]# helm search hub redis|grep -i stable

[root@k8s-master01 ~]#

7.2 Add a third-party chart repository to Helm:

[root@k8s-master01 ~]# helm repo add stable http://mirror.azure.cn/kubernetes/charts/

"stable" already exists with the same configuration, skipping

[root@k8s-master01 ~]# helm repo list

NAME URL

bitnami https://charts.bitnami.com/bitnami

dandydev https://dandydeveloper.github.io/charts

stable http://mirror.azure.cn/kubernetes/charts/

7.3 Once the third-party repository is added, charts can be searched like this:

[root@k8s-master01 ~]# helm search repo redis

NAME CHART VERSION APP VERSION DESCRIPTION

bitnami/redis 16.8.5 6.2.6 Redis(TM) is an open source, advanced key-value...

bitnami/redis-cluster 7.4.6 6.2.6 Redis(TM) is an open source, scalable, distribu...

dandydev/redis-ha 4.15.0 6.2.5 This Helm chart provides a highly available Red...

stable/prometheus-redis-exporter 3.5.1 1.3.4 DEPRECATED Prometheus exporter for Redis metrics

stable/redis 10.5.7 5.0.7 DEPRECATED Open source, advanced key-value stor...

stable/redis-ha 4.4.6 5.0.6 DEPRECATED - Highly available Kubernetes implem...

stable/sensu 0.2.5 0.28 DEPRECATED Sensu monitoring framework backed by...

7.4 Pull and modify the redis-ha chart

- Pull the redis-ha chart to the local machine

[root@k8s-master01 linux-amd64]# helm pull stable/redis-ha

[root@k8s-master01 linux-amd64]# ll

total 44088

-rwxr-xr-x 1 root root 45109248 Apr 19 13:10 helm

-rw-r--r-- 1 3434 3434 11373 Jun 29 2021 LICENSE

-rw-r--r-- 1 3434 3434 3367 Jun 29 2021 README.md

-rw-r--r-- 1 root root 17546 Apr 19 13:10 redis-ha-4.4.6.tgz

- Extract the redis-ha chart

[root@k8s-master01 linux-amd64]# tar -xvf redis-ha-4.4.6.tgz

redis-ha/Chart.yaml

redis-ha/values.yaml

redis-ha/templates/NOTES.txt

redis-ha/templates/_configs.tpl

redis-ha/templates/_helpers.tpl

redis-ha/templates/redis-auth-secret.yaml

redis-ha/templates/redis-ha-announce-service.yaml

redis-ha/templates/redis-ha-configmap.yaml

redis-ha/templates/redis-ha-exporter-script-configmap.yaml

redis-ha/templates/redis-ha-pdb.yaml

redis-ha/templates/redis-ha-role.yaml

redis-ha/templates/redis-ha-rolebinding.yaml

redis-ha/templates/redis-ha-service.yaml

redis-ha/templates/redis-ha-serviceaccount.yaml

redis-ha/templates/redis-ha-servicemonitor.yaml

redis-ha/templates/redis-ha-statefulset.yaml

redis-ha/templates/redis-haproxy-deployment.yaml

redis-ha/templates/redis-haproxy-service.yaml

redis-ha/templates/redis-haproxy-serviceaccount.yaml

redis-ha/templates/redis-haproxy-servicemonitor.yaml

redis-ha/templates/tests/test-redis-ha-configmap.yaml

redis-ha/templates/tests/test-redis-ha-pod.yaml

redis-ha/README.md

redis-ha/ci/haproxy-enabled-values.yaml

- Enter the redis-ha chart directory

[root@k8s-master01 linux-amd64]# ll

total 44092

-rwxr-xr-x 1 root root 45109248 Apr 19 13:10 helm

-rw-r--r-- 1 3434 3434 11373 Jun 29 2021 LICENSE

-rw-r--r-- 1 3434 3434 3367 Jun 29 2021 README.md

drwxr-xr-x 4 root root 4096 Apr 19 13:11 redis-ha

-rw-r--r-- 1 root root 17546 Apr 19 13:10 redis-ha-4.4.6.tgz

[root@k8s-master01 linux-amd64]# cd redis-ha

[root@k8s-master01 redis-ha]# ll

total 64

-rwxr-xr-x 1 root root 507 Nov 14 2020 Chart.yaml

drwxr-xr-x 2 root root 4096 Apr 19 13:11 ci

-rwxr-xr-x 1 root root 38240 Nov 14 2020 README.md

drwxr-xr-x 3 root root 4096 Apr 19 13:11 templates

-rwxr-xr-x 1 root root 11632 Nov 14 2020 values.yaml

[root@k8s-master01 redis-ha]#

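Of the files above, README.md is the documentation, the templates directory holds the deployment templates, and the variables those templates use are stored in values.yaml. To deploy the application, you only need to edit values.yaml.

- Inspect the directory structure of the redis-ha chart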

yum install -y tree

[root@k8s-master01 redis-ha]# tree .

.

├── Chart.yaml

├── ci

│ └── haproxy-enabled-values.yaml

├── README.md

├── templates

│ ├── _configs.tpl

│ ├── _helpers.tpl

│ ├── NOTES.txt

│ ├── redis-auth-secret.yaml

│ ├── redis-ha-announce-service.yaml

│ ├── redis-ha-configmap.yaml

│ ├── redis-ha-exporter-script-configmap.yaml

│ ├── redis-ha-pdb.yaml

│ ├── redis-haproxy-deployment.yaml

│ ├── redis-haproxy-serviceaccount.yaml

│ ├── redis-haproxy-servicemonitor.yaml

│ ├── redis-haproxy-service.yaml

│ ├── redis-ha-rolebinding.yaml

│ ├── redis-ha-role.yaml

│ ├── redis-ha-serviceaccount.yaml

│ ├── redis-ha-servicemonitor.yaml

│ ├── redis-ha-service.yaml

│ ├── redis-ha-statefulset.yaml

│ └── tests

│ ├── test-redis-ha-configmap.yaml

│ └── test-redis-ha-pod.yaml

└── values.yaml

3 directories, 24 files

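- Edit values.yaml. The main changes are:

a. Change "hardAntiAffinity: true" to "hardAntiAffinity: false" (only needed when replicas exceeds the number of worker nodes)

b. Change "auth: false" to "auth: true", then uncomment "# redisPassword:" and set a password

c. Uncomment "# storageClass: "-"" and replace "-" with the cluster's dynamically provisioned StorageClass, "nfs-storage". The "size: 10Gi" setting is the default and can be adjusted as needed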
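As an alternative to editing values.yaml in place, the same three changes can be kept in a separate override file and passed to Helm with `-f`. This is a sketch under assumptions: `write_redis_overrides` is a hypothetical helper, the top-level keys are the ones named in the list above, and placing `storageClass` and `size` under `persistentVolume` is an assumption about the chart's layout that should be checked against the chart's own values.yaml.

```shell
#!/usr/bin/env bash
# Write a Helm values override file for the redis-ha chart.
# $1 = output file, $2 = redis password
write_redis_overrides() {
  cat > "$1" <<EOF
hardAntiAffinity: false
auth: true
redisPassword: "$2"
persistentVolume:
  storageClass: "nfs-storage"
  size: 10Gi
EOF
}

# Usage (myredis is the example password used later in this walkthrough):
#   write_redis_overrides overrides.yaml myredis
#   helm install redis ./redis-ha -n redis -f overrides.yaml
```

Keeping the overrides separate leaves the pulled chart untouched, which makes it easier to see exactly what was changed.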

7.5 Deploy the redis-ha chart

- There are two options; pick one (the second is recommended)

[root@k8s-master01 redis-ha]# pwd

/root/linux-amd64/redis-ha

[root@k8s-master01 redis-ha]# ll

total 64

-rwxr-xr-x 1 root root 507 Nov 14 2020 Chart.yaml

drwxr-xr-x 2 root root 4096 Apr 19 13:11 ci

-rwxr-xr-x 1 root root 38240 Nov 14 2020 README.md

drwxr-xr-x 3 root root 4096 Apr 19 13:11 templates

-rwxr-xr-x 1 root root 11632 Nov 14 2020 values.yaml

[root@k8s-master01 redis-ha]# helm package ./

Successfully packaged chart and saved it to: /root/linux-amd64/redis-ha/redis-ha-4.4.6.tgz

[root@k8s-master01 redis-ha]# ll

total 84

-rwxr-xr-x 1 root root 507 Nov 14 2020 Chart.yaml

drwxr-xr-x 2 root root 4096 Apr 19 13:11 ci

-rwxr-xr-x 1 root root 38240 Nov 14 2020 README.md

-rw-r--r-- 1 root root 17537 Apr 19 13:29 redis-ha-4.4.6.tgz

drwxr-xr-x 3 root root 4096 Apr 19 13:11 templates

-rwxr-xr-x 1 root root 11632 Nov 14 2020 values.yaml

[root@k8s-master01 redis-ha]# helm install redis-ha-4.4.6.tgz --namespace=redis --generate-name # the release name is generated randomly

- The recommended option is as follows

[root@k8s-master01 linux-amd64]# pwd

/root/linux-amd64

[root@k8s-master01 linux-amd64]# ll

total 44072

-rwxr-xr-x 1 root root 45109248 Apr 19 13:10 helm

-rw-r--r-- 1 3434 3434 11373 Jun 29 2021 LICENSE

-rw-r--r-- 1 3434 3434 3367 Jun 29 2021 README.md

drwxr-xr-x 4 root root 4096 Apr 19 13:29 redis-ha

[root@k8s-master01 linux-amd64]# kubectl create namespace redis

[root@k8s-master01 linux-amd64]# helm install redis ./redis-ha -n redis # the release name is fixed here

WARNING: This chart is deprecated

NAME: redis

LAST DEPLOYED: Mon Apr 18 23:15:45 2022

NAMESPACE: redis

STATUS: deployed

REVISION: 1

NOTES:

Redis can be accessed via port 6379 and Sentinel can be accessed via port 26379 on the following DNS name from within your cluster:

redis-redis-ha.redis.svc.cluster.local

To connect to your Redis server:

1. To retrieve the redis password:

echo $(kubectl get secret redis-redis-ha -o "jsonpath={.data['auth']}" | base64 --decode)

2. Connect to the Redis master pod that you can use as a client. By default the redis-redis-ha-server-0 pod is configured as the master:

kubectl exec -it redis-redis-ha-server-0 sh -n redis

3. Connect using the Redis CLI (inside container):

redis-cli -a <REDIS-PASS-FROM-SECRET>

7.6 redis-ha deployment status

[root@k8s-master01 linux-amd64]# kubectl get all -n redis

NAME READY STATUS RESTARTS AGE

pod/redis-redis-ha-server-0 2/2 Running 2 (13h ago) 14h

pod/redis-redis-ha-server-1 2/2 Running 2 (13h ago) 14h

pod/redis-redis-ha-server-2 2/2 Running 0 14h

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/redis-redis-ha ClusterIP None <none> 6379/TCP,26379/TCP 14h

service/redis-redis-ha-announce-0 ClusterIP 10.105.37.67 <none> 6379/TCP,26379/TCP 14h

service/redis-redis-ha-announce-1 ClusterIP 10.100.232.102 <none> 6379/TCP,26379/TCP 14h

service/redis-redis-ha-announce-2 ClusterIP 10.103.83.23 <none> 6379/TCP,26379/TCP 14h

NAME READY AGE

statefulset.apps/redis-redis-ha-server 3/3 14h

7.7 Verify redis-ha

[root@k8s-master01 linux-amd64]# kubectl exec -it redis-redis-ha-server-0 -n redis sh

/data $ nslookup redis-redis-ha.redis.svc.cluster.local

Name: redis-redis-ha.redis.svc.cluster.local

Address 1: 10.244.3.9 redis-redis-ha-server-1.redis-redis-ha.redis.svc.cluster.local

Address 2: 10.244.1.11 redis-redis-ha-server-0.redis-redis-ha.redis.svc.cluster.local

Address 3: 10.244.2.6 10-244-2-6.redis-redis-ha-announce-2.redis.svc.cluster.local

/data $ redis-cli -h redis-redis-ha.redis.svc.cluster.local -p 6379 # log in to redis

redis-redis-ha.redis.svc.cluster.local:6379> auth myredis # myredis is the password set in values.yaml

OK

redis-redis-ha.redis.svc.cluster.local:6379> info replication # check replication status; this node is the master

# Replication

role:master

connected_slaves:2

min_slaves_good_slaves:2

slave0:ip=10.103.83.23,port=6379,state=online,offset=10129791,lag=0

slave1:ip=10.100.232.102,port=6379,state=online,offset=10129652,lag=1

master_replid:a5c77d36ca291f8444a384d101d6d302e3e85475

master_replid2:1aa623df64d623c10e83ad5cdc1195f954962992

master_repl_offset:10129791

second_repl_offset:7314

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:9081216

repl_backlog_histlen:1048576

# Simulate a crash to verify that redis-ha fails over to a new master

redis-redis-ha.redis.svc.cluster.local:6379> SHUTDOWN

[root@k8s-node01 ~]# kubectl get pod -n redis

NAME READY STATUS RESTARTS AGE

redis-redis-ha-server-0 1/2 CrashLoopBackOff 3 (21s ago) 14h

redis-redis-ha-server-1 2/2 Running 2 (13h ago) 14h

redis-redis-ha-server-2 2/2 Running 0 14h

[root@k8s-node01 ~]# kubectl exec -it redis-redis-ha-server-1 -n redis sh

kubectl exec [POD] [COMMAND] is DEPRECATED and will be removed in a future version. Use kubectl exec [POD] -- [COMMAND] instead.

Defaulted container "redis" out of: redis, sentinel, config-init (init)

/data $ redis-cli

127.0.0.1:6379> auth myredis

OK

127.0.0.1:6379> info replication

# Replication

role:master

connected_slaves:2

min_slaves_good_slaves:2

slave0:ip=10.103.83.23,port=6379,state=online,offset=15803,lag=0

slave1:ip=10.105.37.67,port=6379,state=online,offset=15803,lag=1

master_replid:6022d3b93fd6179eb510973563b15b09e26da302

master_replid2:bf21b773407268b7f678e60455082cf397f336ae

master_repl_offset:15803

second_repl_offset:7631

repl_backlog_active:1

repl_backlog_size:1048576

repl_backlog_first_byte_offset:1281

repl_backlog_histlen:14523

127.0.0.1:6379>

This shows that the redis master moved from redis-redis-ha-server-0 to redis-redis-ha-server-1. The installation succeeded!
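The failover check above reads the `role:` line of `info replication` by eye. A short sketch of the same check in script form follows; `redis_role` is a hypothetical helper, and the pod name, container name, and password in the usage comment are the ones that appear in this walkthrough.

```shell
#!/usr/bin/env bash
# Read `info replication` output on stdin and print the value of the role field.
# redis-cli output uses CRLF line endings, so carriage returns are stripped first.
redis_role() {
  tr -d '\r' | sed -n 's/^role://p'
}

# Usage: print the role of each server pod to see which one is the master
#   for i in 0 1 2; do
#     kubectl exec redis-redis-ha-server-$i -n redis -c redis -- \
#       redis-cli -a myredis info replication | redis_role
#   done
```

Running the loop before and after the SHUTDOWN shows the master role moving from one pod to another.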