前置准备

CentOS7、jdk1.8、hadoop-2.7.7、zookeeper-3.5.7

想要完成本期视频中所有操作,需要以下准备:

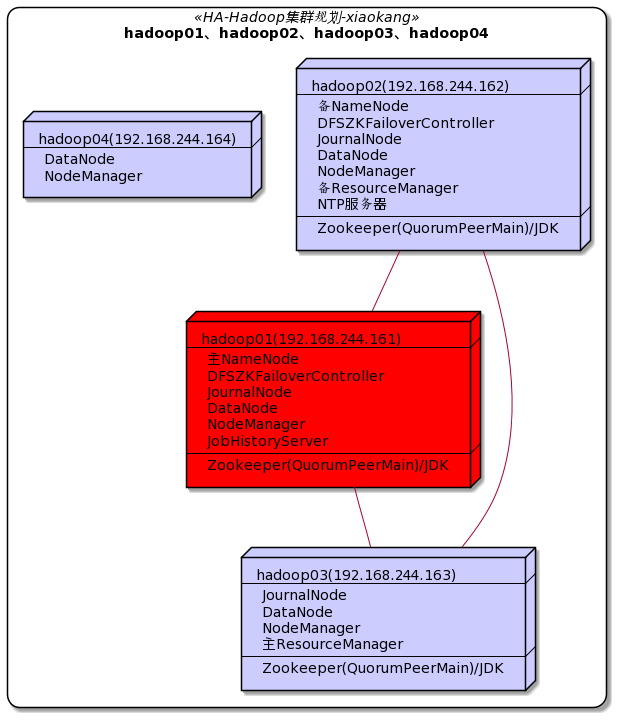

一、集群规划

下面的步骤我们将实现把hadoop04动态上线,再将hadoop04动态下线

二、启动现有集群

[xiaokang@hadoop01 ~]$ ha-hadoop.sh start

三、服役新节点(动态上线节点)

3.1 创建新的节点(实际场景下机器已备好)

克隆一个新节点,然后务必将之前集群的数据删除(配置文件中配置的存放数据的目录)。自定义用户、固定IP、主机名、IP与主机映射、自身免密登陆、防火墙都搞好。

[xiaokang@hadoop04 ~]$ rm -rf /opt/software/hadoop-2.7.7/tmp/*

[xiaokang@hadoop04 ~]$ rm -rf /opt/software/hadoop-2.7.7/dfs/journalnode_data/*

[xiaokang@hadoop04 ~]$ rm -rf /opt/software/hadoop-2.7.7/dfs/edits/*

[xiaokang@hadoop04 ~]$ rm -rf /opt/software/hadoop-2.7.7/dfs/datanode_data/*

[xiaokang@hadoop04 ~]$ rm -rf /opt/software/hadoop-2.7.7/dfs/namenode_data/*

将hadoop04的公钥发给hadoop01和02

[xiaokang@hadoop04 ~]$ ssh-copy-id hadoop01

[xiaokang@hadoop04 ~]$ ssh-copy-id hadoop02

将hadoop01和02的公钥发给hadoop04

[xiaokang@hadoop01 ~]$ ssh-copy-id hadoop04

[xiaokang@hadoop02 ~]$ ssh-copy-id hadoop04

3.2 创建dn-include.conf文件

在NameNode的hadoop安装目录的etc/hadoop目录下创建dn-include.conf文件(注意文件内一行一个主机名),内容如下

[xiaokang@hadoop01 ~]$ touch /opt/software/hadoop-2.7.7/etc/hadoop/dn-include.conf

hadoop01

hadoop02

hadoop03

hadoop04

3.3 修改hdfs-site.xml和slaves

hdfs-site.xml配置文件中增加dfs.hosts属性

<property>

<name>dfs.hosts</name>

<value>/opt/software/hadoop-2.7.7/etc/hadoop/dn-include.conf</value>

</property>

slaves

hadoop01

hadoop02

hadoop03

hadoop04

3.4 分发文件

[xiaokang@hadoop01 ~]$ distribution-dn.sh /opt/software/hadoop-2.7.7/etc/hadoop/dn-include.conf

[xiaokang@hadoop01 ~]$ distribution-dn.sh /opt/software/hadoop-2.7.7/etc/hadoop/hdfs-site.xml

[xiaokang@hadoop01 ~]$ distribution-dn.sh /opt/software/hadoop-2.7.7/etc/hadoop/slaves

3.5 启动DN和NM

[xiaokang@hadoop04 ~]$ hadoop-daemon.sh start datanode

[xiaokang@hadoop04 ~]$ yarn-daemon.sh start nodemanager

3.6 主NN和主RM进行刷新

[xiaokang@hadoop01 ~]$ hdfs dfsadmin -refreshNodes

[xiaokang@hadoop03 ~]$ yarn rmdmin -refreshNodes

3.7 开启数据均衡

[xiaokang@hadoop04 ~]$ start-balancer.sh -threshold 10

# 参数值10代表集群中各个节点的磁盘空间利用率相差不超过10%,可根据实际情况进行调整,值越低各节点越平衡,但这样消耗时间也更长

数据分散差不多后,这里一定要记得停止数据均衡,不然会一直检测各个节点的磁盘空间利用率,很耗费资源

[xiaokang@hadoop04 ~]$ stop-balancer.sh

四、退役旧节点(动态下线节点)

退役之前咱们先上传一个74M的jar包,hadoop04退役之后数据会转移到其它DN之上

[xiaokang@hadoop04 ~]$ hdfs dfs -put event_ingestion-0.0.1.jar /

4.1 创建dn-exclude.conf文件

在NameNode的hadoop安装目录的etc/hadoop目录下创建dn-exclude.conf文件(注意文件内一行一个主机名),将需要退役的节点的主机名加入其中

hadoop04

4.2 修改hdfs-site.xml

hdfs-site.xml配置文件中增加dfs.hosts.exclude属性

<property>

<name>dfs.hosts.exclude</name>

<value>/opt/software/hadoop-2.7.7/etc/hadoop/dn-exclude.conf</value>

</property>

4.3 分发文件

[xiaokang@hadoop01 ~]$ distribution-dn.sh /opt/software/hadoop-2.7.7/etc/hadoop/dn-exclude.conf

[xiaokang@hadoop01 ~]$ distribution-dn.sh /opt/software/hadoop-2.7.7/etc/hadoop/hdfs-site.xml

4.4 主NN和主RM进行刷新

[xiaokang@hadoop01 ~]$ hdfs dfsadmin -refreshNodes

[xiaokang@hadoop03 ~]$ yarn rmdmin -refreshNodes

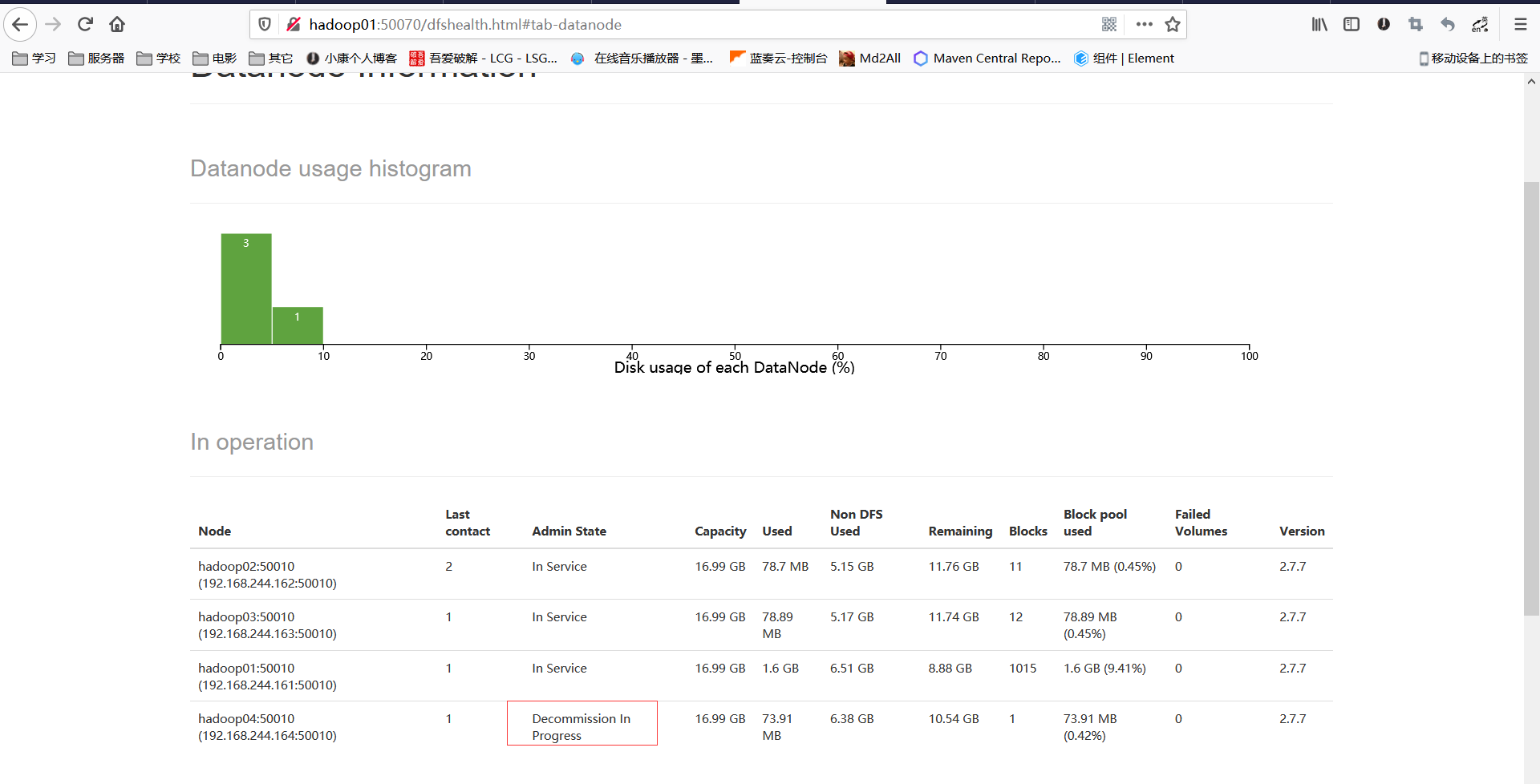

通过WebUI可以看到退役节点的状态为Decommission In Progress(退役中),说明数据节点正在复制块到其它节点。

4.5 关闭DN和RM

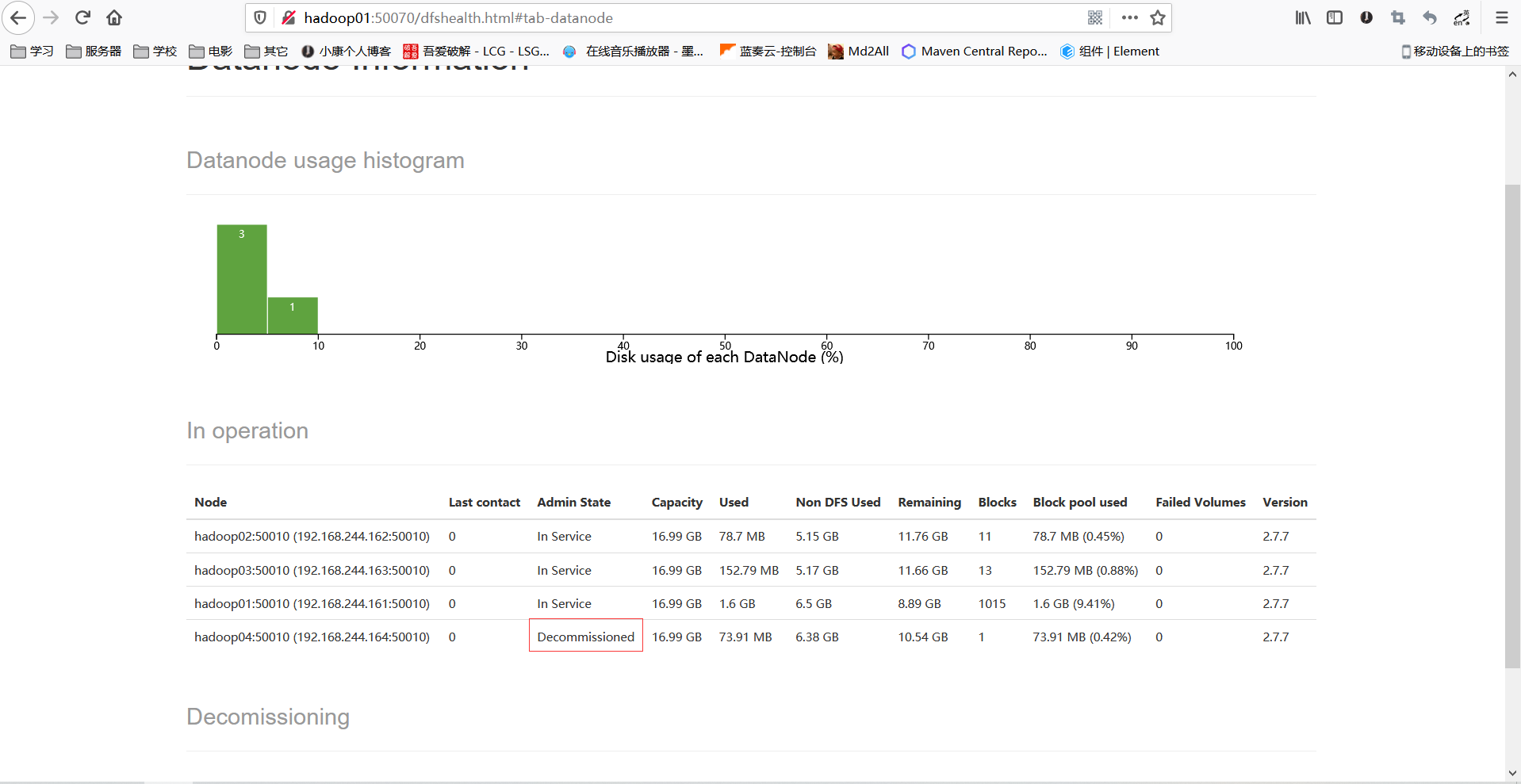

等待退役节点状态为 Decommissioned (所有块已经复制完成),执行下面操作

[xiaokang@hadoop04 ~]$ hadoop-daemon.sh stop datanode

[xiaokang@hadoop04 ~]$ yarn-daemon.sh stop nodemanager

4.6 从slaves和dn-include.conf中剔除退役节点

# 1.将slaves和dn-include.conf中将hadoop04去除

# 2.修改好后进行分发

[xiaokang@hadoop01 hadoop]$ distribution-dn.sh /opt/software/hadoop-2.7.7/etc/hadoop/slaves

[xiaokang@hadoop01 hadoop]$ distribution-dn.sh /opt/software/hadoop-2.7.7/etc/hadoop/dn-include.conf

# 3.主NN和主RM进行刷新

[xiaokang@hadoop01 ~]$ hdfs dfsadmin -refreshNodes

[xiaokang@hadoop03 ~]$ yarn rmdmin -refreshNodes

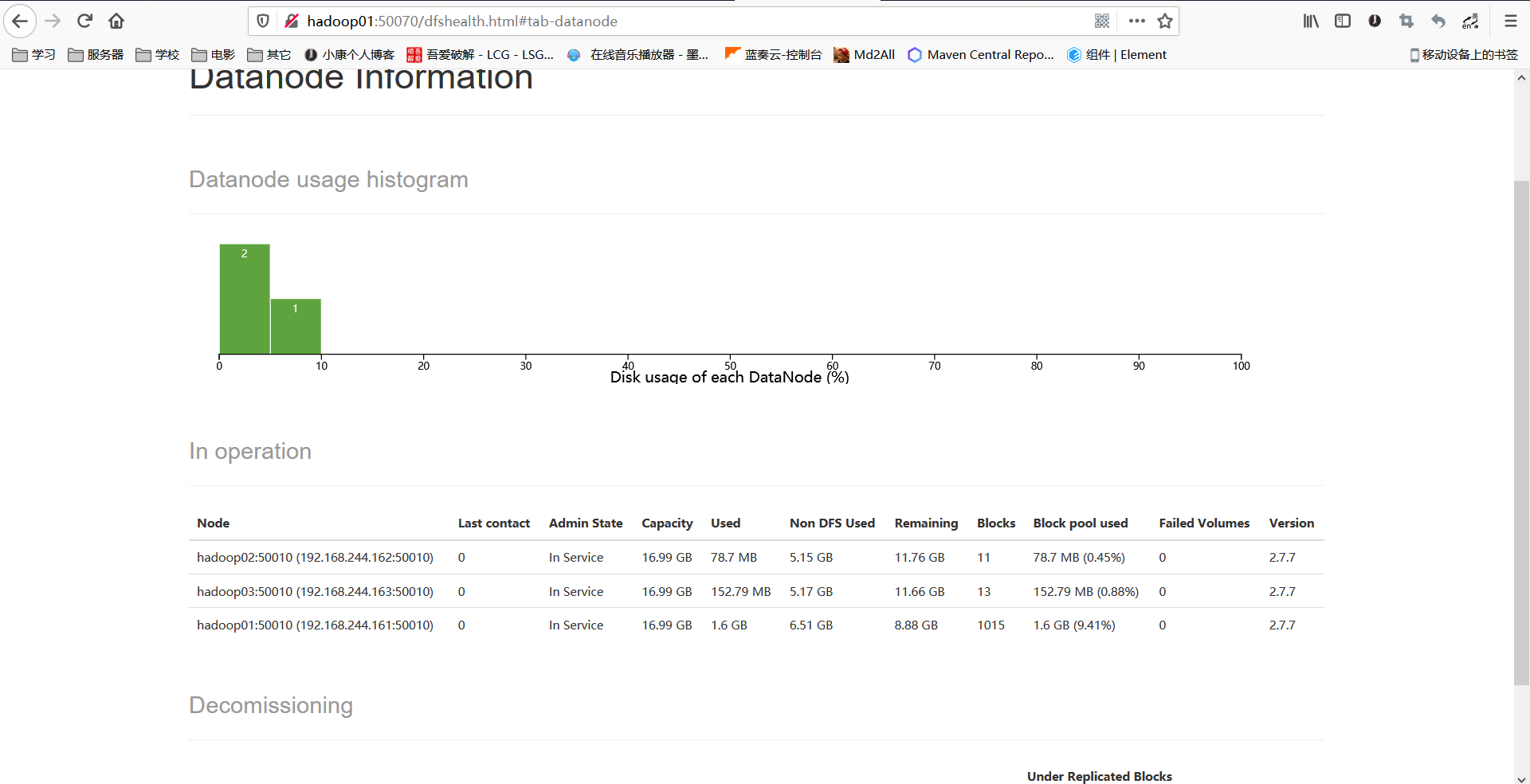

最后大功告成,如下图所示

版权声明:本文为weixin_42341823原创文章,遵循CC 4.0 BY-SA版权协议,转载请附上原文出处链接和本声明。