I. A Complete Model Training Workflow

CIFAR10 is used as the example.

1. Create the datasets

    # Prepare the datasets
    import torchvision

    # Training set
    train_data = torchvision.datasets.CIFAR10("../dataset", train=True,
                                              transform=torchvision.transforms.ToTensor(),
                                              download=True)

    # Test set
    test_data = torchvision.datasets.CIFAR10("../dataset", train=False,
                                             transform=torchvision.transforms.ToTensor(),
                                             download=True)

You can then check the size of each dataset:

    # Dataset sizes
    train_data_size = len(train_data)
    test_data_size = len(test_data)
    print("Size of the training set: {}".format(train_data_size))  # Size of the training set: 50000
    print("Size of the test set: {}".format(test_data_size))  # Size of the test set: 10000

2. Load the datasets (DataLoader)

    from torch.utils.data import DataLoader

    # Load the datasets with DataLoader
    train_dataloader = DataLoader(train_data, batch_size=64)
    test_dataloader = DataLoader(test_data, batch_size=64)
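To see what a DataLoader yields without downloading anything, here is a minimal sketch that uses a stand-in dataset of random tensors with CIFAR10's shapes. The TensorDataset, the 200-sample size, and the `fake_*` names are assumptions for illustration, not part of the original code.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Stand-in for CIFAR10: 200 random 3x32x32 "images" with labels in [0, 10)
fake_imgs = torch.rand(200, 3, 32, 32)
fake_targets = torch.randint(0, 10, (200,))
fake_data = TensorDataset(fake_imgs, fake_targets)

fake_loader = DataLoader(fake_data, batch_size=64)

batch_shapes = []
for imgs, targets in fake_loader:
    batch_shapes.append((tuple(imgs.shape), tuple(targets.shape)))

print(len(fake_loader))   # number of batches: ceil(200 / 64) = 4
print(batch_shapes[0])    # ((64, 3, 32, 32), (64,))
print(batch_shapes[-1])   # the last batch is smaller: ((8, 3, 32, 32), (8,))
```

By the same arithmetic, the 50000 training images with batch_size=64 give 782 batches per epoch, the last one partial. Passing drop_last=True to DataLoader discards that partial batch.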

3. Build the neural network

It is best to put the model in a separate file, model.py.

    import torch
    from torch import nn

    # Build the neural network
    class ExamModule(nn.Module):
        def __init__(self):
            super(ExamModule, self).__init__()
            self.model = nn.Sequential(
                nn.Conv2d(3, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 64, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Flatten(),
                nn.Linear(64 * 4 * 4, 64),
                nn.Linear(64, 10)
            )

        def forward(self, x):
            x = self.model(x)
            return x

Check that the network is wired correctly (optional):

    if __name__ == '__main__':
        # Instantiate the model and pass a dummy batch through it
        ex_model = ExamModule()
        dummy_input = torch.ones((64, 3, 32, 32))
        output = ex_model(dummy_input)
        print(output.shape)  # torch.Size([64, 10])
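The 64 * 4 * 4 input size of the first Linear layer follows from the shapes: each 5x5 convolution with stride 1 and padding 2 keeps the 32x32 spatial size, and each MaxPool2d(2) halves it (32 → 16 → 8 → 4). The sketch below traces this layer by layer; the `layers` and `shapes` names are illustrative.

```python
import torch
from torch import nn

layers = nn.Sequential(
    nn.Conv2d(3, 32, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 32, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 64, 5, 1, 2),
    nn.MaxPool2d(2),
    nn.Flatten(),
    nn.Linear(64 * 4 * 4, 64),
    nn.Linear(64, 10),
)

x = torch.ones((1, 3, 32, 32))
shapes = []
with torch.no_grad():
    for layer in layers:
        x = layer(x)
        shapes.append(tuple(x.shape))
        print(type(layer).__name__, tuple(x.shape))
```

The line printed for Flatten shows 1024 features, which is exactly 64 * 4 * 4.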

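4. Create the model instance

    # Create the model instance
    # from model import ExamModule  # if the class lives in model.py as suggested above
    ex_model = ExamModule()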

5. Loss function

    # Loss function
    loss_fn = nn.CrossEntropyLoss()
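nn.CrossEntropyLoss expects raw, unnormalized scores (logits) of shape (batch, classes) and integer class indices of shape (batch,). It applies log-softmax internally, which is why the network above ends in a plain Linear layer with no softmax. A small sketch with made-up numbers:

```python
import torch
from torch import nn
import torch.nn.functional as F

loss_fn = nn.CrossEntropyLoss()

logits = torch.tensor([[2.0, 0.5, 0.1],
                       [0.2, 0.3, 3.0]])
targets = torch.tensor([0, 2])

loss = loss_fn(logits, targets)

# The same value computed by hand: mean negative log-softmax at the target index
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(2), targets].mean()

print(loss.item(), manual.item())
```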

6. Optimizer

    # Optimizer
    # SGD: stochastic gradient descent
    # learning_rate = 0.01
    learning_rate = 1e-2  # learning rate (1e-2 = 0.01)
    optimizer = torch.optim.SGD(ex_model.parameters(), lr=learning_rate)
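With no momentum or weight decay (the defaults), torch.optim.SGD updates each parameter as p ← p − lr · grad. The sketch below checks this on a single parameter; the toy loss is an assumption chosen so the gradient is easy to verify by hand.

```python
import torch

w = torch.tensor([1.0, -2.0], requires_grad=True)
optimizer = torch.optim.SGD([w], lr=1e-2)

loss = (w ** 2).sum()      # toy loss; its gradient is 2 * w
optimizer.zero_grad()
loss.backward()

expected = w.detach() - 1e-2 * w.grad   # manual update, computed before step()
optimizer.step()

print(w.detach(), expected)
```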

7. Set up the training parameters

    # Training parameters
    total_train_step = 0  # number of training steps so far
    total_test_step = 0   # number of evaluation rounds so far
    epoch = 10            # number of training epochs

8. Train the model and evaluate it

The outer loop runs once per epoch:

    for i in range(epoch):
        print("-------------Epoch {} training starts-------------".format(i + 1))

Training

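        # Training phase
        ex_model.train()  # only affects certain layers, such as Dropout and BatchNorm
        for data in train_dataloader:
            imgs, targets = data
            outputs = ex_model(imgs)
            loss = loss_fn(outputs, targets)  # loss of the predictions against the ground-truth targets

            # Update the model with the optimizer
            optimizer.zero_grad()  # clear the gradients
            loss.backward()        # backpropagate to get the gradient of every parameter
            optimizer.step()       # apply the update

            total_train_step += 1
            if total_train_step % 100 == 0:
                print("Training step: {}, Loss: {}".format(total_train_step, loss.item()))

The model can be evaluated on the test set after every epoch.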
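The evaluation code in this section counts correct predictions by comparing output.argmax(1) with the targets. A minimal sketch of that computation on made-up scores (the values are illustrative assumptions):

```python
import torch

# Scores for 4 samples over 3 classes
output = torch.tensor([[0.1, 0.7, 0.2],
                       [0.9, 0.05, 0.05],
                       [0.2, 0.3, 0.5],
                       [0.6, 0.3, 0.1]])
target = torch.tensor([1, 0, 1, 0])

preds = output.argmax(1)                  # index of the highest score in each row
correct = (preds == target).sum().item()  # number of matching predictions
accuracy = correct / len(target)

print(preds, correct, accuracy)
```

Summing `correct` over all batches and dividing by the test set size gives the overall accuracy.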

        # Evaluation phase
        ex_model.eval()
        total_test_loss = 0
        total_accuracy = 0
        with torch.no_grad():
            for data in test_dataloader:
                img, target = data
                output = ex_model(img)
                accuracy = (target == output.argmax(1)).sum()  # correct predictions in this batch
                total_accuracy += accuracy.item()
                loss = loss_fn(output, target)
                total_test_loss += loss.item()
        print("Loss on the whole test set: {}, ACC: {}".format(total_test_loss, total_accuracy / test_data_size))

9. Add TensorBoard

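    from torch.utils.tensorboard import SummaryWriter

    # Create the writer once, before the training loop ("logs" is an assumed directory name)
    writer = SummaryWriter("logs")

        # Inside the epoch loop, after the evaluation phase:
        print("Loss on the whole test set: {}".format(total_test_loss))
        print("Accuracy on the whole test set: {}".format(total_accuracy / test_data_size))
        writer.add_scalar("test_loss", total_test_loss, total_test_step)
        writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
        total_test_step += 1

        torch.save(ex_model, "ex_model_{}.pth".format(i))
        print("Model saved...")

    # After the last epoch
    writer.close()

① Training the model on the GPU (method 1)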

Only a few lines need to change (the ex_model, loss_fn, imgs, and targets parts).

The model, the loss function, and the data each need .cuda() called on them.

    # Model --> cuda
    ex_model = ExamModule()
    if torch.cuda.is_available():
        ex_model = ex_model.cuda()  # move the model to the GPU

    # Loss function --> cuda
    loss_fn = nn.CrossEntropyLoss()
    if torch.cuda.is_available():
        loss_fn = loss_fn.cuda()

    # Data --> cuda (inside the batch loop)
    imgs, targets = data
    if torch.cuda.is_available():
        imgs = imgs.cuda()
        targets = targets.cuda()

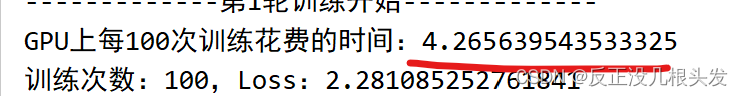

# 注意:训练数据和测试数据都要添加该段代码在GPU上速度快了很多

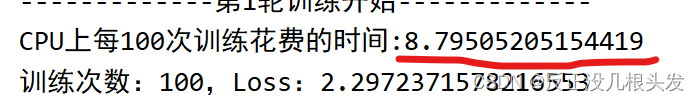

CPU上:

GPU上:
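One way to measure the difference yourself is to time a fixed number of training steps on each device. This is a rough sketch that assumes a small stand-in model and random data; `time_steps` is a hypothetical helper, and the real numbers depend on the hardware and the model size.

```python
import time
import torch
from torch import nn

def time_steps(device, steps=20):
    """Time `steps` SGD steps of a small stand-in model on `device`."""
    model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 32 * 32, 64), nn.Linear(64, 10)).to(device)
    loss_fn = nn.CrossEntropyLoss().to(device)
    optimizer = torch.optim.SGD(model.parameters(), lr=1e-2)
    imgs = torch.rand(64, 3, 32, 32, device=device)
    targets = torch.randint(0, 10, (64,), device=device)

    start = time.perf_counter()
    for _ in range(steps):
        loss = loss_fn(model(imgs), targets)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
    if device.type == "cuda":
        torch.cuda.synchronize()  # wait for queued GPU work before stopping the clock
    return time.perf_counter() - start

cpu_time = time_steps(torch.device("cpu"))
print("CPU: {:.4f}s".format(cpu_time))
if torch.cuda.is_available():
    print("GPU: {:.4f}s".format(time_steps(torch.device("cuda"))))
```

For a model this small the GPU may not come out ahead, since launch overhead dominates. The gap widens with larger models and batches.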

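② Training the model on the GPU (method 2)

    device = torch.device("cpu")
    device = torch.device("cuda")    # the current CUDA device, by default the same as torch.device("cuda:0")
    device = torch.device("cuda:1")  # the second GPU

Call .to(device) on the model, the loss function, imgs, and targets.

    # Define the training device
    device = torch.device("cuda")
    # device = torch.device("cpu")

    # Or: train on the GPU if one is available, otherwise on the CPU
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

    # Model --> device
    ex_model = ExamModule()
    ex_model = ex_model.to(device)  # move the network to the device
    # ex_model.to(device)           # for a module, this alone is enough

    # Loss function --> device
    loss_fn = nn.CrossEntropyLoss()
    loss_fn = loss_fn.to(device)    # move the loss function to the device
    # loss_fn.to(device)            # for a module, this alone is enough

    # Data --> device (tensors must be reassigned)
    imgs, targets = data
    imgs = imgs.to(device)
    targets = targets.to(device)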
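The shortcut without reassignment works for the model and the loss function because nn.Module.to changes the module's parameters in place and returns the same module. Tensor.to instead returns a new tensor when a conversion is needed, so tensors such as imgs and targets must be reassigned. The sketch below illustrates the difference with a dtype conversion rather than a device move, so that it also runs on a CPU-only machine; the variable names are illustrative.

```python
import torch
from torch import nn

layer = nn.Linear(4, 2)
returned = layer.to(torch.float64)   # converts the parameters in place
print(returned is layer)             # True: the same module object comes back
print(layer.weight.dtype)            # torch.float64

t = torch.ones(3)                    # float32 by default
t.to(torch.float64)                  # result discarded, t itself is unchanged
dtype_without_reassign = t.dtype
t = t.to(torch.float64)              # reassign to keep the converted tensor
dtype_with_reassign = t.dtype
print(dtype_without_reassign, dtype_with_reassign)
```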

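II. A Complete Model Validation (Test/Demo) Workflow

Take the model that has already been trained and feed it an input.

Once the model tests OK, it can be put to use on real inputs.

    import torch
    import torchvision
    from PIL import Image
    from torch import nn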

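Input

    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    img_path = "../imgs/003.png"
    img = Image.open(img_path)
    img = img.convert("RGB")  # a PNG may carry a fourth (alpha) channel besides RGB
    transform = torchvision.transforms.Compose([torchvision.transforms.Resize((32, 32)),
                                                torchvision.transforms.ToTensor()])
    img = transform(img)
    img = img.to(device)
    # print(img.shape)

Note: a PNG image may have four channels. Besides the three RGB channels there can be an alpha (transparency) channel, so the image is converted to RGB before it is passed to the model.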

Define the model

The class definition must be available when loading a model that was saved whole with torch.save(ex_model, ...).

    class ExamModule(nn.Module):
        def __init__(self):
            super(ExamModule, self).__init__()
            self.model = nn.Sequential(
                nn.Conv2d(3, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 32, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Conv2d(32, 64, 5, 1, 2),
                nn.MaxPool2d(2),
                nn.Flatten(),
                nn.Linear(64 * 4 * 4, 64),
                nn.Linear(64, 10)
            )

        def forward(self, x):
            x = self.model(x)
            return x

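    ex_model = torch.load("ex_model_29.pth", map_location=device)
    # ex_model = torch.load("ex_model_29.pth", map_location=torch.device("cpu"))
    # A model trained on the GPU must be mapped to the CPU with
    # map_location=torch.device("cpu") when it is loaded on a machine without a GPU.
    # On PyTorch 2.6+, loading a whole pickled model also needs weights_only=False (trusted files only).
    print(ex_model)

Test the output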

    img = torch.reshape(img, (1, 3, 32, 32))  # add the batch dimension
    # print(img.shape)
    ex_model.eval()
    with torch.no_grad():
        output = ex_model(img)
    print(output)
    print(output.argmax(1))  # index of the predicted class
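The index printed by output.argmax(1) can be mapped to a label using CIFAR10's class order. The list below matches the `classes` attribute of torchvision's CIFAR10 dataset; the `output` values are made up so that the sketch runs without a trained model.

```python
import torch

classes = ["airplane", "automobile", "bird", "cat", "deer",
           "dog", "frog", "horse", "ship", "truck"]

# Stand-in for the model output of one image: 10 raw scores
output = torch.tensor([[0.1, -1.2, 0.3, 0.8, 0.0, 4.5, -0.7, 0.2, -2.0, 0.4]])

probs = torch.softmax(output, dim=1)   # turn the raw scores into probabilities
index = output.argmax(1).item()        # position of the highest score
print(classes[index], probs[0, index].item())
```

In the real script, `train_data.classes` can be used instead of a hand-written list.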

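Copyright notice: this is an original article by weixin_44334635, released under the CC 4.0 BY-SA license. When reposting, please include a link to the original source and this notice.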