极限学习机

单隐藏层反馈神经网络具有两个比较突出的能力:

(1)可以直接从训练样本中拟 合 出 复 杂 的 映 射 函 数f :x ^ t

(2)可以为大量难以用传统分类参数技术处理的自然或者人工现象提供模型。但是单隐藏层反馈神经网络缺少比较快速的学习方 法 。误差反向传播算法每次迭代需要更新n x(L+ 1) +L x (m+ 1 )个 值 ,所花费的时间远远低于所容忍的时间。经常可以看到为训练一个单隐藏层反馈神经网络花费了数小时,数天或者更多的时间。

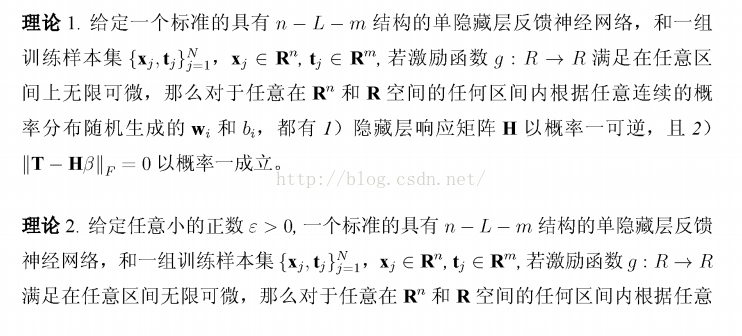

基于以上原因,黄广斌教授对单隐藏层反馈神经网络进行了深入的研究,提

出并证明了两个大胆的理论:

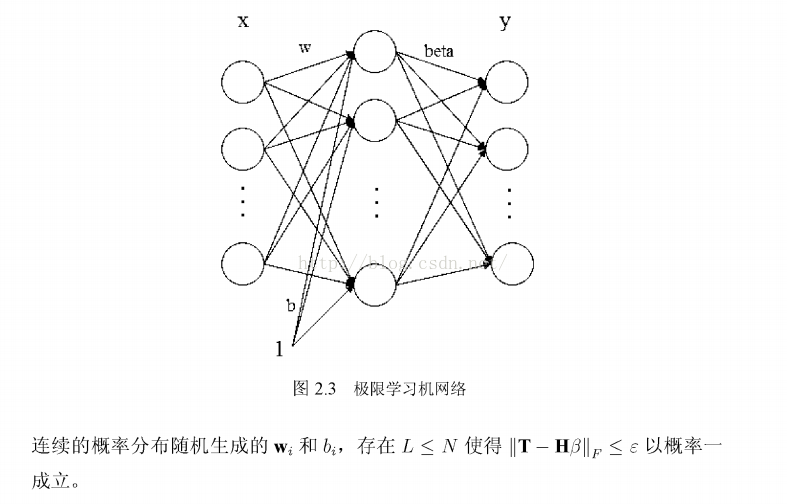

从以上两条理论我们可以看出,只要激励函数g:R ^ R满足在任意区间上无限可微,那么wt和bt可以从R的n维和R空间的任何区间内根据任意连续的概率分布随机生成 ,也就是说单隐藏层前馈神经网络无需再对wt和bt进行调整;又因为式子||TH*beta||_f=0以概率一成立,我们发现输出层的偏置也不再需要。那么一个新型的但隐藏层反馈神经网络如图2 .3表示 。

对比图2.2,缺少了输出层偏 置bs,而输入权重w和隐藏层偏置bi随机产生不需要调整,那么整个网络仅仅剩下输出权重beta一项没有确定。因此极限学习机

应运而生。令神经网络的输出等于样本标签,如式(2-11)表示

n x(L+1) +Lx(m+1)个值,且反向传播算法为了保证系统的稳定性通常选取较小的学习率,使得学习时间大大加长。因此极限学习机在这一方法优势非常巨大,在实验中 ,极限学习机往往在数秒内就完成了运算。而一些比较经典的算法在训练一个单隐藏层神经网络的时候即使是很小的应用也要花费大量的时间,似乎这些算法存在着一个无法逾越的虚拟速度壁垒。

(2)在大多数的应用中,极限学习机的泛化能力大于类似于误差反向传播算法这类的基于梯度的算法。

( 3 )传统的基于梯度的算法需要面对诸如局部最小,合适的学习率、过拟合等问题 ,而极限学习机一步到位直接构建起单隐藏层反馈神经网络,避免了这些难以处理的棘手问题。

极限学习机由于这些优势,广大研究人员对极限学习机报以极大的兴趣,使得这几年极限学习机的理论发展很快,应用也在不断地拓宽。

小结

本文主要对极限学习机的本质原理和来龙去脉作了详尽的介绍,阐明极限学习机所具有的各种各样优势吸引广大学者进行研究。然而极限学习机也有自己的劣势,接下来我们将对极限学习机的劣势进行分析和研究并加以改进。

MATLAB程序:可以到GB.Huang老师个人网站上下载

1、一个回归的例子:(数据、程序放在同一文件夹)

主函数main.m(数据在这sinc_train,sinc_test)

clear all;

close all;

clc;

[TrainingTime, TestingTime, TrainingAccuracy, TestingAccuracy] = ELM('sinc_train', 'sinc_test', 0, 20, 'sig')ELM.m

function [TrainingTime, TestingTime, TrainingAccuracy, TestingAccuracy] = elm(TrainingData_File, TestingData_File, Elm_Type, NumberofHiddenNeurons, ActivationFunction)

% Usage: elm(TrainingData_File, TestingData_File, Elm_Type, NumberofHiddenNeurons, ActivationFunction)

% OR: [TrainingTime, TestingTime, TrainingAccuracy, TestingAccuracy] = elm(TrainingData_File, TestingData_File, Elm_Type, NumberofHiddenNeurons, ActivationFunction)

%

% Input:

% TrainingData_File - Filename of training data set

% TestingData_File - Filename of testing data set

% Elm_Type - 0 for regression; 1 for (both binary and multi-classes) classification

% NumberofHiddenNeurons - Number of hidden neurons assigned to the ELM

% ActivationFunction - Type of activation function:

% 'sig' for Sigmoidal function

% 'sin' for Sine function

% 'hardlim' for Hardlim function

% 'tribas' for Triangular basis function

% 'radbas' for Radial basis function (for additive type of SLFNs instead of RBF type of SLFNs)

%

% Output:

% TrainingTime - Time (seconds) spent on training ELM

% TestingTime - Time (seconds) spent on predicting ALL testing data

% TrainingAccuracy - Training accuracy:

% RMSE for regression or correct classification rate for classification

% TestingAccuracy - Testing accuracy:

% RMSE for regression or correct classification rate for classification

%

% MULTI-CLASSE CLASSIFICATION: NUMBER OF OUTPUT NEURONS WILL BE AUTOMATICALLY SET EQUAL TO NUMBER OF CLASSES

% FOR EXAMPLE, if there are 7 classes in all, there will have 7 output

% neurons; neuron 5 has the highest output means input belongs to 5-th class

%

% Sample1 regression: [TrainingTime, TestingTime, TrainingAccuracy, TestingAccuracy] = elm('sinc_train', 'sinc_test', 0, 20, 'sig')

% Sample2 classification: elm('diabetes_train', 'diabetes_test', 1, 20, 'sig')

%

%%%% Authors: MR QIN-YU ZHU AND DR GUANG-BIN HUANG

%%%% NANYANG TECHNOLOGICAL UNIVERSITY, SINGAPORE

%%%% EMAIL: EGBHUANG@NTU.EDU.SG; GBHUANG@IEEE.ORG

%%%% WEBSITE: http://www.ntu.edu.sg/eee/icis/cv/egbhuang.htm

%%%% DATE: APRIL 2004

%%%%%%%%%%% Macro definition

REGRESSION=0;

CLASSIFIER=1;

%%%%%%%%%%% Load training dataset

train_data=load(TrainingData_File);

T=train_data(:,1)';

P=train_data(:,2:size(train_data,2))';

clear train_data; % Release raw training data array

%%%%%%%%%%% Load testing dataset

test_data=load(TestingData_File);

TV.T=test_data(:,1)';

TV.P=test_data(:,2:size(test_data,2))';

clear test_data; % Release raw testing data array

NumberofTrainingData=size(P,2);

NumberofTestingData=size(TV.P,2);

NumberofInputNeurons=size(P,1);

if Elm_Type~=REGRESSION

%%%%%%%%%%%% Preprocessing the data of classification

sorted_target=sort(cat(2,T,TV.T),2);

label=zeros(1,1); % Find and save in 'label' class label from training and testing data sets

label(1,1)=sorted_target(1,1);

j=1;

for i = 2:(NumberofTrainingData+NumberofTestingData)

if sorted_target(1,i) ~= label(1,j)

j=j+1;

label(1,j) = sorted_target(1,i);

end

end

number_class=j;

NumberofOutputNeurons=number_class;

%%%%%%%%%% Processing the targets of training

temp_T=zeros(NumberofOutputNeurons, NumberofTrainingData);

for i = 1:NumberofTrainingData

for j = 1:number_class

if label(1,j) == T(1,i)

break;

end

end

temp_T(j,i)=1;

end

T=temp_T*2-1;

%%%%%%%%%% Processing the targets of testing

temp_TV_T=zeros(NumberofOutputNeurons, NumberofTestingData);

for i = 1:NumberofTestingData

for j = 1:number_class

if label(1,j) == TV.T(1,i)

break;

end

end

temp_TV_T(j,i)=1;

end

TV.T=temp_TV_T*2-1;

end % end if of Elm_Type

%%%%%%%%%%% Calculate weights & biases

start_time_train=cputime;

%%%%%%%%%%% Random generate input weights InputWeight (w_i) and biases BiasofHiddenNeurons (b_i) of hidden neurons

InputWeight=rand(NumberofHiddenNeurons,NumberofInputNeurons)*2-1;

BiasofHiddenNeurons=rand(NumberofHiddenNeurons,1);

tempH=InputWeight*P;

clear P; % Release input of training data

ind=ones(1,NumberofTrainingData);

BiasMatrix=BiasofHiddenNeurons(:,ind); % Extend the bias matrix BiasofHiddenNeurons to match the demention of H

tempH=tempH+BiasMatrix;

%%%%%%%%%%% Calculate hidden neuron output matrix H

switch lower(ActivationFunction)

case {'sig','sigmoid'}

%%%%%%%% Sigmoid

H = 1 ./ (1 + exp(-tempH));

case {'sin','sine'}

%%%%%%%% Sine

H = sin(tempH);

case {'hardlim'}

%%%%%%%% Hard Limit

H = double(hardlim(tempH));

case {'tribas'}

%%%%%%%% Triangular basis function

H = tribas(tempH);

case {'radbas'}

%%%%%%%% Radial basis function

H = radbas(tempH);

%%%%%%%% More activation functions can be added here

end

clear tempH; % Release the temparary array for calculation of hidden neuron output matrix H

%%%%%%%%%%% Calculate output weights OutputWeight (beta_i)

OutputWeight=pinv(H') * T'; % implementation without regularization factor //refer to 2006 Neurocomputing paper

%OutputWeight=inv(eye(size(H,1))/C+H * H') * H * T'; % faster method 1 //refer to 2012 IEEE TSMC-B paper

%implementation; one can set regularizaiton factor C properly in classification applications

%OutputWeight=(eye(size(H,1))/C+H * H') \ H * T'; % faster method 2 //refer to 2012 IEEE TSMC-B paper

%implementation; one can set regularizaiton factor C properly in classification applications

%If you use faster methods or kernel method, PLEASE CITE in your paper properly:

%Guang-Bin Huang, Hongming Zhou, Xiaojian Ding, and Rui Zhang, "Extreme Learning Machine for Regression and Multi-Class Classification," submitted to IEEE Transactions on Pattern Analysis and Machine Intelligence, October 2010.

end_time_train=cputime;

TrainingTime=end_time_train-start_time_train % Calculate CPU time (seconds) spent for training ELM

%%%%%%%%%%% Calculate the training accuracy

Y=(H' * OutputWeight)'; % Y: the actual output of the training data

if Elm_Type == REGRESSION

TrainingAccuracy=sqrt(mse(T - Y)) % Calculate training accuracy (RMSE) for regression case

end

clear H;

%%%%%%%%%%% Calculate the output of testing input

start_time_test=cputime;

tempH_test=InputWeight*TV.P;

clear TV.P; % Release input of testing data

ind=ones(1,NumberofTestingData);

BiasMatrix=BiasofHiddenNeurons(:,ind); % Extend the bias matrix BiasofHiddenNeurons to match the demention of H

tempH_test=tempH_test + BiasMatrix;

switch lower(ActivationFunction)

case {'sig','sigmoid'}

%%%%%%%% Sigmoid

H_test = 1 ./ (1 + exp(-tempH_test));

case {'sin','sine'}

%%%%%%%% Sine

H_test = sin(tempH_test);

case {'hardlim'}

%%%%%%%% Hard Limit

H_test = hardlim(tempH_test);

case {'tribas'}

%%%%%%%% Triangular basis function

H_test = tribas(tempH_test);

case {'radbas'}

%%%%%%%% Radial basis function

H_test = radbas(tempH_test);

%%%%%%%% More activation functions can be added here

end

TY=(H_test' * OutputWeight)'; % TY: the actual output of the testing data

end_time_test=cputime;

TestingTime=end_time_test-start_time_test % Calculate CPU time (seconds) spent by ELM predicting the whole testing data

if Elm_Type == REGRESSION

TestingAccuracy=sqrt(mse(TV.T - TY)); % Calculate testing accuracy (RMSE) for regression case

end

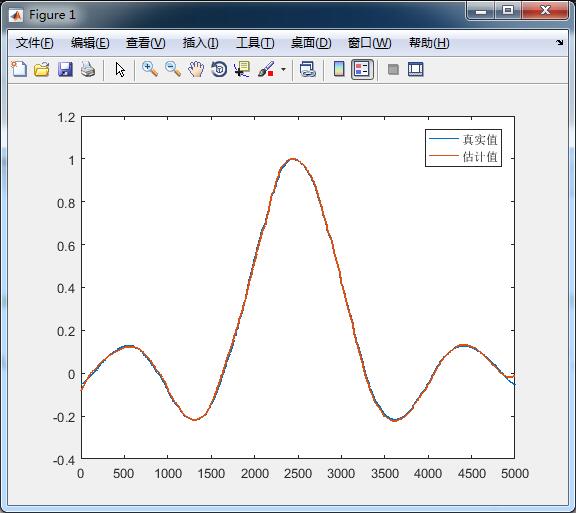

if Elm_Type == REGRESSION

figure

plot(TV.T,'LineWidth',1.2);hold on ;

plot(TY,'LineWidth',1.2)

legend('真实值','估计值')

end

if Elm_Type == CLASSIFIER

%%%%%%%%%% Calculate training & testing classification accuracy

MissClassificationRate_Training=0;

MissClassificationRate_Testing=0;

for i = 1 : size(T, 2)

[x, label_index_expected]=max(T(:,i));

[x, label_index_actual]=max(Y(:,i));

if label_index_actual~=label_index_expected

MissClassificationRate_Training=MissClassificationRate_Training+1;

end

end

TrainingAccuracy=1-MissClassificationRate_Training/size(T,2)

for i = 1 : size(TV.T, 2)

[x, label_index_expected]=max(TV.T(:,i));

[x, label_index_actual]=max(TY(:,i));

if label_index_actual~=label_index_expected

MissClassificationRate_Testing=MissClassificationRate_Testing+1;

end

end

TestingAccuracy=1-MissClassificationRate_Testing/size(TV.T,2)

end测试结果:

TrainingTime =0.1248

TestingTime = 0

TrainingAccuracy = 0.1158

TestingAccuracy =0.0074

2、一个分类的例子

主函数main.m(数据在这diabetes_train,diabetes_test)

clear all;

close all;

clc;

[TrainingTime, TestingTime, TrainingAccuracy, TestingAccuracy] = ELM('diabetes_train', 'diabetes_test', 1, 20, 'sig')ELM.m还是上面的那个

仿真结果:

TrainingTime =0.0624

TestingTime = 0

TrainingAccuracy = 0.7674

TestingAccuracy =0.7865

欢迎评论,点赞!