Setting up a Kubernetes cluster on CentOS 7

Environment:

| IP | Hostname | Role |
|---|---|---|
| 192.168.25.133 | k8s01 | master |
| 192.168.25.134 | k8s02 | slave |
| 192.168.25.135 | k8s03 | slave |

Install the required packages

yum install -y net-tools.x86_64 wget yum-utils

Configure /etc/hosts

cat >> /etc/hosts << EOF
192.168.25.133 k8s01
192.168.25.134 k8s02
192.168.25.135 k8s03
EOF
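Because the heredoc above appends with `>>`, running it a second time leaves duplicate lines in /etc/hosts. A minimal sketch of an idempotent variant follows. The helper name `add_host_entry` and the `HOSTS_FILE` variable are illustrative assumptions, not part of the original procedure, and the demo writes to a scratch file rather than the real /etc/hosts.

```shell
#!/usr/bin/env bash
# add_host_entry FILE IP NAME: append "IP NAME" to FILE unless that exact line already exists.
add_host_entry() {
    local file="$1" ip="$2" name="$3"
    # -q: quiet, -x: match the whole line, -F: fixed string (no regex)
    grep -qxF "$ip $name" "$file" 2>/dev/null || printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demo against a scratch file; set HOSTS_FILE=/etc/hosts (as root) to edit the real file.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"
for round in 1 2; do    # the second round shows that nothing is duplicated
    add_host_entry "$HOSTS_FILE" 192.168.25.133 k8s01
    add_host_entry "$HOSTS_FILE" 192.168.25.134 k8s02
    add_host_entry "$HOSTS_FILE" 192.168.25.135 k8s03
done
cat "$HOSTS_FILE"
```

The exact-line match means an entry with the same IP but a different hostname is still added, which is usually what you want when a host has several names.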

Disable the firewall

systemctl disable firewalld

systemctl stop firewalld

Disable SELinux so that containers can read the host filesystem without being blocked

setenforce 0

sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config

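Turn off swap. Swap is disk space that the operating system uses as virtual memory when RAM runs short. According to the Kubernetes documentation, swap hurts Kubernetes performance and is not recommended; by default, kubelet refuses to start while swap is enabled.

swapoff -a # temporary: takes effect immediately, lost on reboot

vi /etc/fstab # permanent: delete or comment out the swap line; takes effect from the next boot

Run swapoff -a for the current session, and edit /etc/fstab so that swap stays off after a reboot.

If you keep swap enabled instead, edit the kubelet configuration with vi /etc/sysconfig/kubelet :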

KUBELET_EXTRA_ARGS="--fail-swap-on=false"
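The manual /etc/fstab edit can also be scripted. The following is a sketch only: the helper name `comment_out_swap` is hypothetical, the two fstab entries are made-up sample data, and the demo runs against a scratch file so that nothing on the system is touched.

```shell
#!/usr/bin/env bash
# comment_out_swap FILE: prefix every active line that has a whitespace-delimited
# "swap" field with '#'. Lines that are already commented are left alone.
comment_out_swap() {
    local file="$1"
    # -i.bak edits in place and keeps a backup as FILE.bak
    sed -i.bak -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$file"
}

# Demo on a scratch file with sample entries.
FSTAB="$(mktemp)"
cat > "$FSTAB" <<'EOF'
/dev/mapper/centos-root /     xfs  defaults 0 0
/dev/mapper/centos-swap swap  swap defaults 0 0
EOF
comment_out_swap "$FSTAB"
cat "$FSTAB"
```

On a real node the call would be `comment_out_swap /etc/fstab`, run as root, followed by `swapoff -a` so the change also applies to the running system.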

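Pass bridged IPv4 traffic to the iptables chains:

cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF

Apply the settings and print the values that were loaded. The net.bridge.* keys exist only while the br_netfilter kernel module is loaded, so run modprobe br_netfilter first if sysctl reports them as missing.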

sysctl --system

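Run on each of the three machines to generate its own SSH key pair

ssh-keygen -t rsa -f /root/.ssh/id_rsa -P ""

Run on each of the three machines to set up passwordless SSH access to the other machines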

ssh-copy-id k8s01

ssh-copy-id k8s02

ssh-copy-id k8s03
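The three ssh-copy-id calls can be folded into a loop. The sketch below uses a hypothetical `copy_keys` helper with a `DRY_RUN` switch (an assumption added for illustration) so that the commands can be previewed before they are executed.

```shell
#!/usr/bin/env bash
# copy_keys HOST...: run ssh-copy-id for every listed host.
# With DRY_RUN=1 (the default here) the commands are only printed, not executed.
copy_keys() {
    local host
    for host in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "ssh-copy-id $host"
        else
            ssh-copy-id "$host"
        fi
    done
}

copy_keys k8s01 k8s02 k8s03
```

Running `DRY_RUN=0 copy_keys k8s01 k8s02 k8s03` performs the real copy and prompts for each host's root password once.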

Download the docker-ce.repo file into the /etc/yum.repos.d/ directory

wget -P /etc/yum.repos.d/ https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Create the Kubernetes repository file

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg
EOF

Restart chronyd to synchronize the system time (the clocks on all servers must agree)

systemctl restart chronyd

Configure the Docker repository

yum-config-manager \
    --add-repo \
    http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Install Docker, start it, and enable it at boot

yum install -y docker-ce

systemctl enable docker && systemctl start docker

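Configure a registry mirror and switch Docker to the systemd cgroup driver

cat > /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://3******.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

Reload the systemd configuration and restart Docker

systemctl daemon-reload && systemctl restart docker

Install kubelet, kubeadm, and kubectl, then start kubelet and enable it at boot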

yum install -y kubelet kubeadm kubectl

systemctl enable kubelet && systemctl start kubelet

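Run kubeadm version to see the installed kubeadm version, which tells you which Kubernetes release will be installed.

Run the initialization on the master node. It takes a while, so be patient. The command below installs the latest Kubernetes version. To install a specific version, add the --kubernetes-version parameter, for example: --kubernetes-version="v1.17.4"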

kubeadm init --pod-network-cidr=10.244.0.0/16 --service-cidr=10.1.0.0/16 --apiserver-advertise-address=192.168.25.133 --image-repository registry.aliyuncs.com/google_containers

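When initialization finishes, the master prints a kubeadm join command that contains a token. Save it somewhere safe, because the slave nodes need this token to join the cluster.

Run the join command on both slave nodes to add them to the cluster. Use the full command printed by kubeadm init, which also carries a --discovery-token-ca-cert-hash argument that is omitted here.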

kubeadm join 192.168.25.133:6443 --token 8000y1.xh9227rgmyfy0ljx

After a slave node joins successfully, it prints the message: This node has joined the cluster
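A join that fails is often caused by a mistyped or truncated token. kubeadm bootstrap tokens have the shape of six characters, a dot, then sixteen characters, all lowercase letters or digits. The sketch below checks that shape before a join is attempted. The helper name `is_valid_token` is an illustrative assumption. Tokens also expire (after 24 hours by default), and running `kubeadm token create --print-join-command` on the master prints a fresh join command.

```shell
#!/usr/bin/env bash
# is_valid_token TOKEN: succeed if TOKEN has the kubeadm bootstrap-token shape
# "<6 chars>.<16 chars>", each part made of lowercase letters and digits.
is_valid_token() {
    [[ "$1" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

TOKEN="8000y1.xh9227rgmyfy0ljx"   # the token shown in the join command above
if is_valid_token "$TOKEN"; then
    echo "token format OK"
else
    echo "token looks truncated or mistyped" >&2
fi
```

The check only validates the format; it cannot tell whether the token still exists on the master.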

Running kubectl get nodes on the master at this point fails with: The connection to the server localhost:8080 was refused - did you specify the right host or port?

Fix: point the KUBECONFIG environment variable at /etc/kubernetes/admin.conf

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> /etc/profile

Reload the environment variables

source /etc/profile

Running kubectl get nodes again now succeeds.
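An alternative to exporting KUBECONFIG system-wide is the per-user setup that kubeadm init itself suggests on success: copy admin.conf to $HOME/.kube/config, the path kubectl reads when KUBECONFIG is unset. The sketch below wraps that in a hypothetical `install_kubeconfig` helper (the name and its two parameters are assumptions for illustration) so that it can be pointed at any source file and home directory.

```shell
#!/usr/bin/env bash
# install_kubeconfig SRC HOME_DIR: copy SRC to HOME_DIR/.kube/config and make it
# readable by the owner only. Returns non-zero if SRC cannot be read.
install_kubeconfig() {
    local src="$1" home_dir="$2"
    [ -r "$src" ] || { echo "cannot read $src" >&2; return 1; }
    mkdir -p "$home_dir/.kube"
    cp "$src" "$home_dir/.kube/config"
    chmod 600 "$home_dir/.kube/config"
}

# On the master, after kubeadm init, the call would be:
#   install_kubeconfig /etc/kubernetes/admin.conf "$HOME"
```

Note that the helper overwrites an existing $HOME/.kube/config, so back that file up first if the machine already talks to another cluster.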

After copying admin.conf to the other nodes and setting the same environment variable there, you can also run kubectl on the worker nodes.

scp /etc/kubernetes/admin.conf root@k8s03:/etc/kubernetes/

scp /etc/kubernetes/admin.conf root@k8s02:/etc/kubernetes/

Run on both k8s02 and k8s03:

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> /etc/profile

source /etc/profile

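Install a network plugin from the master node. I chose flannel.

The official way to install flannel is shown below. It fetches the manifest from raw.githubusercontent.com, which may require a proxy, so the full contents of kube-flannel.yml are also provided further down.

kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

The flannel version must be compatible with the Kubernetes version.

I installed Kubernetes v1.22.4, so the flannel version used here is v0.15.1.

kube-flannel.yml v0.15.1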

cat <<EOF > kube-flannel.yml

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni-plugin
        image: rancher/mirrored-flannelcni-flannel-cni-plugin:v1.0.0
        command:
        - cp
        args:
        - -f
        - /flannel
        - /opt/cni/bin/flannel
        volumeMounts:
        - name: cni-plugin
          mountPath: /opt/cni/bin
      - name: install-cni
        image: quay.io/coreos/flannel:v0.15.1
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.15.1
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni-plugin
        hostPath:
          path: /opt/cni/bin
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

EOF

After generating kube-flannel.yml, pull the flannel image and apply the manifest:

docker pull quay.io/coreos/flannel:v0.15.1

kubectl apply -f kube-flannel.yml

[root@k8s01 ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-7f6cbbb7b8-fccvh 1/1 Running 0 4h17m

coredns-7f6cbbb7b8-nb8b9 1/1 Running 0 4h17m

etcd-k8s01 1/1 Running 0 4h17m

kube-apiserver-k8s01 1/1 Running 0 4h17m

kube-controller-manager-k8s01 1/1 Running 0 4h17m

kube-flannel-ds-2gfsw 1/1 Running 0 127m

kube-flannel-ds-9847s 1/1 Running 0 127m

kube-flannel-ds-xxgmn 1/1 Running 0 127m

kube-proxy-46gvj 1/1 Running 0 4h14m

kube-proxy-5cgj4 1/1 Running 0 4h17m

kube-proxy-wd67q 1/1 Running 0 4h14m

kube-scheduler-k8s01 1/1 Running 0 4h17m

[root@k8s01 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.aliyuncs.com/google_containers/kube-apiserver v1.22.4 8a5cc299272d 9 days ago 128MB

registry.aliyuncs.com/google_containers/kube-scheduler v1.22.4 721ba97f54a6 9 days ago 52.7MB

registry.aliyuncs.com/google_containers/kube-controller-manager v1.22.4 0ce02f92d3e4 9 days ago 122MB

registry.aliyuncs.com/google_containers/kube-proxy v1.22.4 edeff87e4802 9 days ago 104MB

quay.io/coreos/flannel v0.15.1 e6ea68648f0c 2 weeks ago 69.5MB

rancher/mirrored-flannelcni-flannel-cni-plugin v1.0.0 cd5235cd7dc2 4 weeks ago 9.03MB

registry.aliyuncs.com/google_containers/etcd 3.5.0-0 004811815584 5 months ago 295MB

registry.aliyuncs.com/google_containers/coredns v1.8.4 8d147537fb7d 6 months ago 47.6MB

registry.aliyuncs.com/google_containers/pause 3.5 ed210e3e4a5b 8 months ago 683kB

[root@k8s01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s01 Ready control-plane,master 4h19m v1.22.4

k8s02 Ready <none> 4h17m v1.22.4

k8s03 Ready <none> 4h17m v1.22.4
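The same readiness check can be scripted, which is handy while waiting for flannel to come up. The sketch below defines a hypothetical `all_nodes_ready` helper (the name is an assumption) that reads `kubectl get nodes --no-headers` output on standard input. The sample input is copied from the output above so that the snippet runs without a cluster.

```shell
#!/usr/bin/env bash
# all_nodes_ready: read `kubectl get nodes --no-headers` output on stdin and succeed
# only if there is at least one node and every STATUS (column 2) is exactly "Ready".
all_nodes_ready() {
    awk '$2 != "Ready" { bad = 1 } END { exit (NR == 0 || bad) ? 1 : 0 }'
}

# On a live cluster:  kubectl get nodes --no-headers | all_nodes_ready
if all_nodes_ready <<'EOF'
k8s01 Ready control-plane,master 4h19m v1.22.4
k8s02 Ready <none> 4h17m v1.22.4
k8s03 Ready <none> 4h17m v1.22.4
EOF
then
    echo "all nodes Ready"
else
    echo "some nodes are not Ready"
fi
```

A compound status such as Ready,SchedulingDisabled is deliberately treated as not ready, since such a node does not accept new pods.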

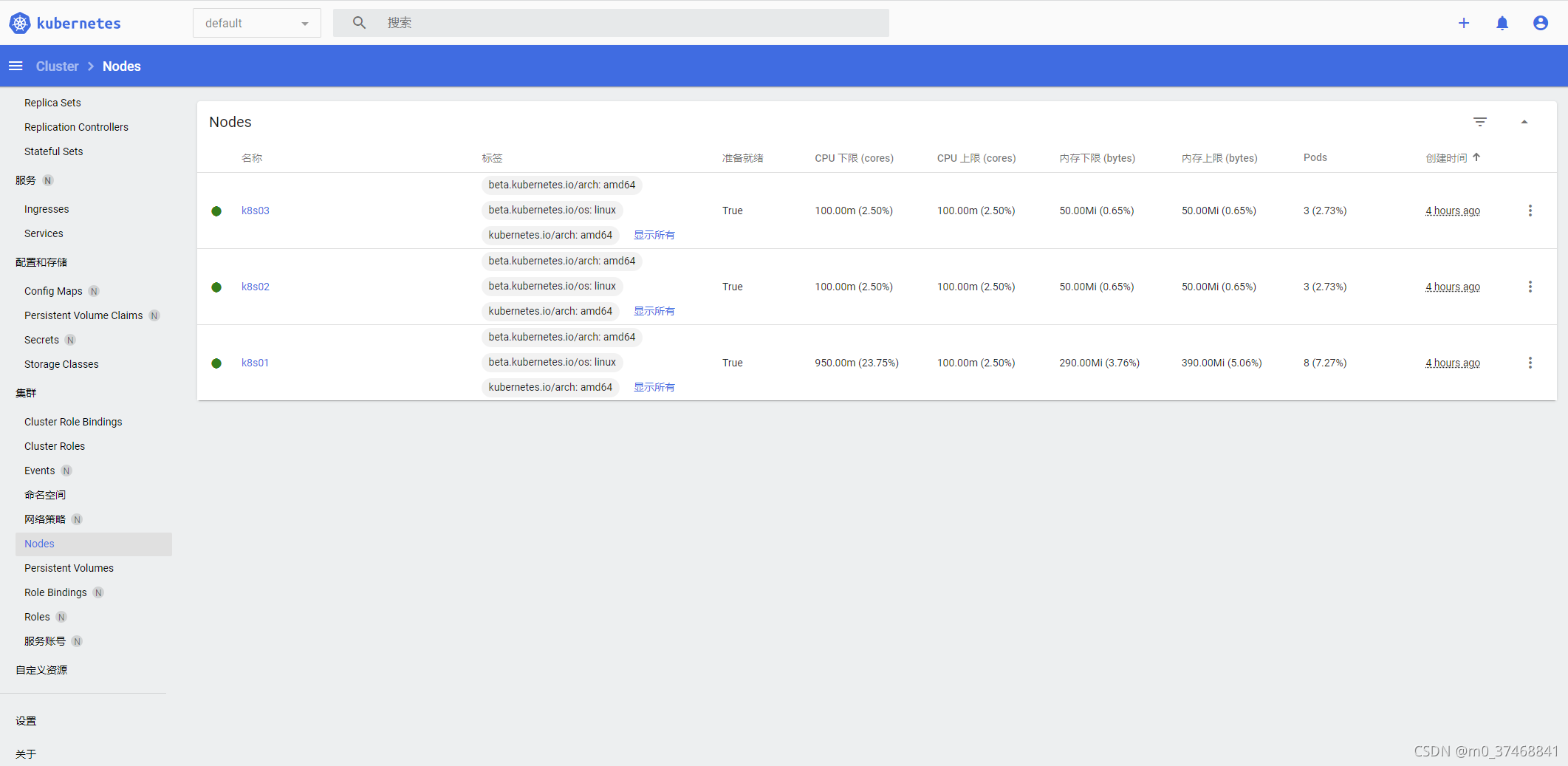

Install kubernetes-dashboard

Download the manifest so that it can be edited before it is applied

wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.4.0/aio/deploy/recommended.yaml

recommended.yaml

# Copyright 2017 The Kubernetes Authors.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#     http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

apiVersion: v1
kind: Namespace
metadata:
  name: kubernetes-dashboard

---
apiVersion: v1
kind: ServiceAccount
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard

---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 443
      targetPort: 8443
  selector:
    k8s-app: kubernetes-dashboard

---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-certs
  namespace: kubernetes-dashboard
type: Opaque

---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-csrf
  namespace: kubernetes-dashboard
type: Opaque
data:
  csrf: ""

---
apiVersion: v1
kind: Secret
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-key-holder
  namespace: kubernetes-dashboard
type: Opaque

---
kind: ConfigMap
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard-settings
  namespace: kubernetes-dashboard

---
kind: Role
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
rules:
  # Allow Dashboard to get, update and delete Dashboard exclusive secrets.
  - apiGroups: [""]
    resources: ["secrets"]
    resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs", "kubernetes-dashboard-csrf"]
    verbs: ["get", "update", "delete"]
  # Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.
  - apiGroups: [""]
    resources: ["configmaps"]
    resourceNames: ["kubernetes-dashboard-settings"]
    verbs: ["get", "update"]
  # Allow Dashboard to get metrics.
  - apiGroups: [""]
    resources: ["services"]
    resourceNames: ["heapster", "dashboard-metrics-scraper"]
    verbs: ["proxy"]
  - apiGroups: [""]
    resources: ["services/proxy"]
    resourceNames: ["heapster", "http:heapster:", "https:heapster:", "dashboard-metrics-scraper", "http:dashboard-metrics-scraper"]
    verbs: ["get"]

---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
rules:
  # Allow Metrics Scraper to get metrics from the Metrics server
  - apiGroups: ["metrics.k8s.io"]
    resources: ["pods", "nodes"]
    verbs: ["get", "list", "watch"]

---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard

---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: kubernetes-dashboard
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: kubernetes-dashboard
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kubernetes-dashboard

---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
        - name: kubernetes-dashboard
          image: kubernetesui/dashboard:v2.4.0
          imagePullPolicy: Always
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            - --namespace=kubernetes-dashboard
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule

---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  ports:
    - port: 8000
      targetPort: 8000
  selector:
    k8s-app: dashboard-metrics-scraper

---
kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: dashboard-metrics-scraper
  name: dashboard-metrics-scraper
  namespace: kubernetes-dashboard
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: dashboard-metrics-scraper
  template:
    metadata:
      labels:
        k8s-app: dashboard-metrics-scraper
    spec:
      securityContext:
        seccompProfile:
          type: RuntimeDefault
      containers:
        - name: dashboard-metrics-scraper
          image: kubernetesui/metrics-scraper:v1.0.7
          ports:
            - containerPort: 8000
              protocol: TCP
          livenessProbe:
            httpGet:
              scheme: HTTP
              path: /
              port: 8000
            initialDelaySeconds: 30
            timeoutSeconds: 30
          volumeMounts:
            - mountPath: /tmp
              name: tmp-volume
          securityContext:
            allowPrivilegeEscalation: false
            readOnlyRootFilesystem: true
            runAsUser: 1001
            runAsGroup: 2001
      serviceAccountName: kubernetes-dashboard
      nodeSelector:
        "kubernetes.io/os": linux
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      volumes:
        - name: tmp-volume
          emptyDir: {}

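Define the port on which the dashboard Service is exposed: set nodePort to 32000 (valid range: 30000-32767). The port must not clash with a NodePort already used by another Service.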

vi recommended.yaml

---
kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort # use NodePort so the dashboard is reachable from outside the cluster
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 32000 # exposed on port 32000 of the host
  selector:
    k8s-app: kubernetes-dashboard
---
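The API server rejects a nodePort outside its configured range, which is 30000-32767 by default, so a typo such as 3200 instead of 32000 only shows up when the manifest is applied. A small sketch of a pre-check follows. The helper name `valid_node_port` is an illustrative assumption, and it assumes the default range has not been changed on the cluster.

```shell
#!/usr/bin/env bash
# valid_node_port PORT: succeed if PORT is an integer inside the default
# NodePort range 30000-32767.
valid_node_port() {
    [[ "$1" =~ ^[0-9]+$ ]] && [ "$1" -ge 30000 ] && [ "$1" -le 32767 ]
}

for port in 32000 3200; do
    if valid_node_port "$port"; then
        echo "$port: inside the NodePort range"
    else
        echo "$port: outside the NodePort range"
    fi
done
```

The check says nothing about whether the port is already taken; `kubectl get svc --all-namespaces` lists the NodePorts currently in use.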

Create the dashboard resources from the edited recommended.yaml

[root@k8s01 ~]# kubectl create -f recommended.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

# Check that kubernetes-dashboard started successfully

[root@k8s01 ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

......

kubernetes-dashboard dashboard-metrics-scraper-c45b7869d-x9rc7 1/1 Running 0 111m

kubernetes-dashboard kubernetes-dashboard-576cb95f94-f68wh 1/1 Running 0 111m

# Check the Service to confirm that the port change took effect

[root@k8s01 ~]# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 10.1.143.142 <none> 8000/TCP 114m

kubernetes-dashboard NodePort 10.1.59.150 <none> 443:32000/TCP 114m

Generate the authentication token for the Dashboard

[root@k8s01 ~]# kubectl create serviceaccount dashboard-admin -n kube-system

serviceaccount/dashboard-admin created

[root@k8s01 ~]# kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

clusterrolebinding.rbac.authorization.k8s.io/dashboard-admin created

[root@k8s01 ~]# kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')

Name: dashboard-admin-token-4fkng

Namespace: kube-system

Labels: <none>

Annotations: kubernetes.io/service-account.name: dashboard-admin

kubernetes.io/service-account.uid: ef0ace5f-a043-419a-8754-985ae57630a9

Type: kubernetes.io/service-account-token

Data

====

ca.crt: 1099 bytes

namespace: 11 bytes

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IkdrUnh1dDAxU0lfZXd5ZTJfeVd1U0prckRnZTE5UWZZaVA3MzVXWkJ6RkEifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJkYXNoYm9hcmQtYWRtaW4tdG9rZW4tNGZrbmciLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC5uYW1lIjoiZGFzaGJvYXJkLWFkbWluIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQudWlkIjoiZWYwYWNlNWYtYTA0My00MTlhLTg3NTQtOTg1YWU1NzYzMGE5Iiwic3ViIjoic3lzdGVtOnNlcnZpY2VhY2NvdW50Omt1YmUtc3lzdGVtOmRhc2hib2FyZC1hZG1pbiJ9.p4A6zTnUC45FMbLadeE9goCd4d-YIswSkyDiD7X8E-zb6oBn5ANQvrbEq4MKCXLESCQF7b2H8EbqtwYRfRAWArU796MWWN3O1L5MohqWwH37x9fo2zYiiH9GUKCf62tHiAU6BR1WlRVURjdDfz2GnTpkmebSsPoFVmyNZ6WvRRUsmz3FKJZywDqWTKoso8Zl_nnDBNFWaCF08Z8YdCKqE67UtwIRSyHX1TN7BwQtmQHu7XLXv7fqI2WRLQVMGTu5ohCcGwbo2-OsnTbyZhmqL3OabyTaE-STJFYLh1k80L3nTTbDaRst7dxbSpl-ZmHQ-5zHLc-gd25NaTUzL2F1LQ
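The token is a JWT, so its claims can be inspected locally to confirm which service account it belongs to before it is pasted into the login page. The sketch below decodes only the payload segment. The helper name `jwt_payload` is an assumption, the demo token is hand-made rather than a real credential, and the signature is not verified. For the token above, the decoded sub claim should identify the dashboard-admin service account in kube-system.

```shell
#!/usr/bin/env bash
# jwt_payload TOKEN: print the decoded payload (second dot-separated segment) of a JWT.
# The signature is NOT verified; this only inspects the claims.
jwt_payload() {
    local seg
    # base64url -> standard base64 alphabet
    seg=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
    # restore the '=' padding that JWTs strip
    case $(( ${#seg} % 4 )) in
        2) seg="$seg==" ;;
        3) seg="$seg=" ;;
    esac
    printf '%s' "$seg" | base64 -d
}

# Tiny hand-made token whose payload is {"sub":"x"}.
jwt_payload 'header.eyJzdWIiOiJ4In0.signature'
echo
```

Anyone holding this token has cluster-admin rights through the binding created above, so treat it like a root password.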

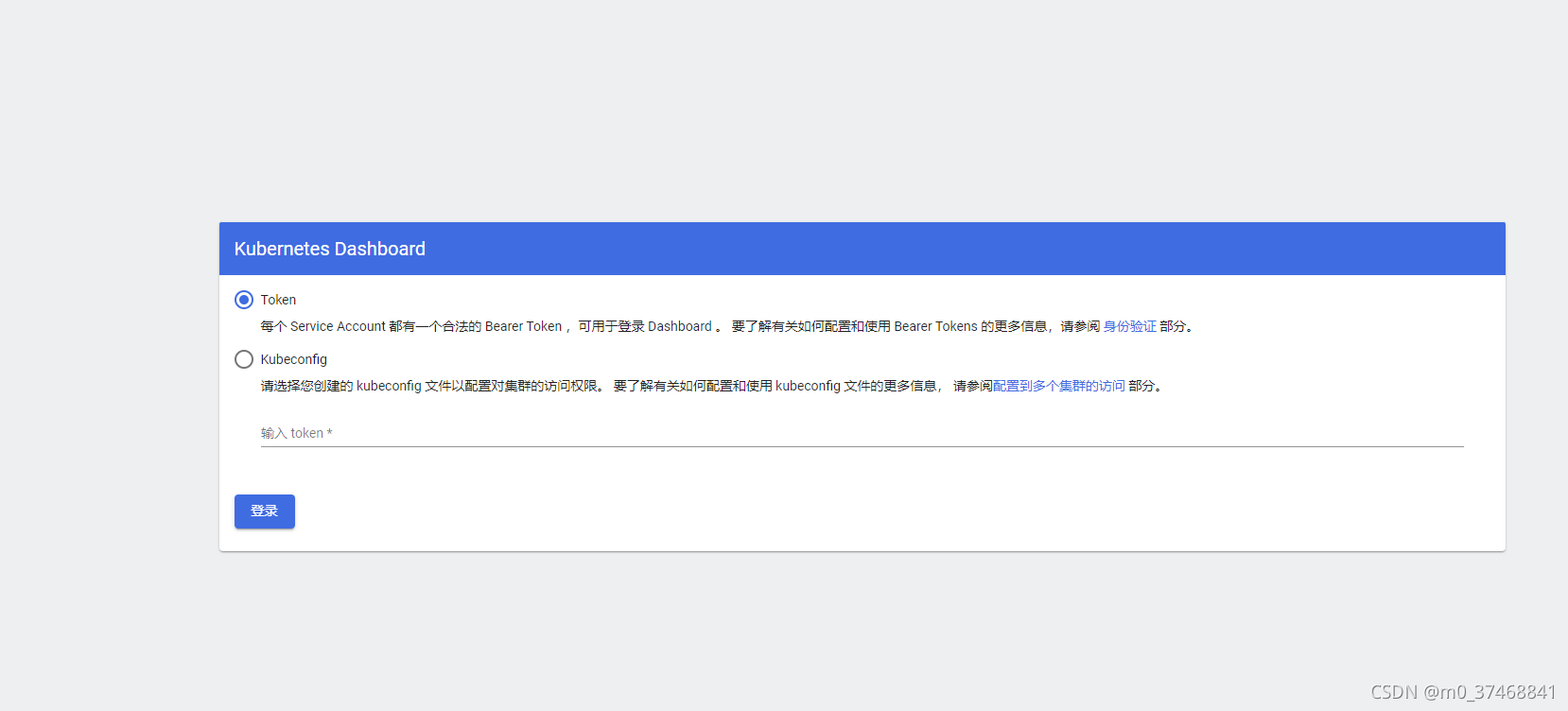

Save the generated token, open https://192.168.25.133:32000/, choose the Token login method, and paste the token.
