配置lzo压缩

1. 为什么配置lzo压缩?

编译hadoop-lzo-0.4.20.jar

#Hadoop支持LZO

#环境准备

#maven(下载安装,配置环境变量,修改sitting.xml加阿里云镜像)

gcc-c++

zlib-devel

autoconf

automake

libtool

#通过yum安装即可

yum -y install gcc-c++ lzo-devel zlib-devel autoconf automake libtool

##1. 下载、安装并编译LZO

wget http://www.oberhumer.com/opensource/lzo/download/lzo-2.10.tar.gz

tar -zxvf lzo-2.10.tar.gz

cd lzo-2.10

./configure -prefix=/usr/local/hadoop/lzo/

make

make install

#2. 编译hadoop-lzo源码

#2.1 下载hadoop-lzo的源码,下载地址:https://github.com/twitter/hadoop-lzo/archive/master.zip

#2.2 解压之后,修改pom.xml

<hadoop.current.version>3.1.3</hadoop.current.version>

#2.3 声明两个临时环境变量

export C_INCLUDE_PATH=/usr/local/hadoop/lzo/include

export LIBRARY_PATH=/usr/local/hadoop/lzo/lib

#2.4 编译

进入hadoop-lzo-master,执行maven编译命令

mvn package -Dmaven.test.skip=true

#2.5 进入target,hadoop-lzo-0.4.21-SNAPSHOT.jar 即编译成功的hadoop-lzo组件

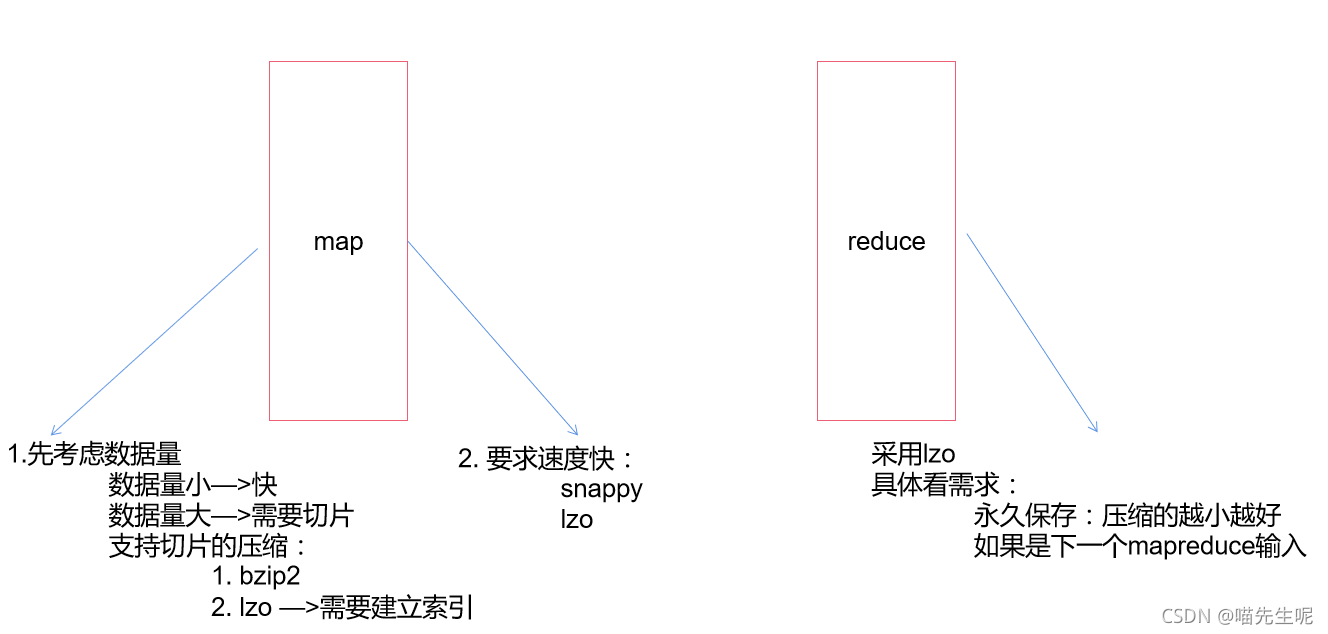

2. 配置lzo压缩的步骤

hadoop本身并不支持lzo压缩,故需要

使用twitter提供的hadoop-lzo开源组件。hadoop-lzo需依赖hadoop和lzo进行编译,编译步骤如下。

将编译好后的hadoop-lzo-0.4.20.jar 放入

hadoop-3.1.3/share/hadoop/common/同步hadoop-lzo-0.4.20.jar到hadoop104、hadoop105

配置core-site.xml增加配置支持LZO压缩

<configuration> <property> <name>io.compression.codecs</name> <value> org.apache.hadoop.io.compress.GzipCodec, org.apache.hadoop.io.compress.DefaultCodec, org.apache.hadoop.io.compress.BZip2Codec, org.apache.hadoop.io.compress.SnappyCodec, com.hadoop.compression.lzo.LzoCodec, com.hadoop.compression.lzo.LzopCodec </value> </property> <property> <name>io.compression.codec.lzo.class</name> <value>com.hadoop.compression.lzo.LzoCodec</value> </property> </configuration>同步core-site.xml到hadoop104、hadoop105

xsync core-site.xml启动及查看集群

sbin/start-dfs.sh sbin/start-dfs.sh

3. 使用lzo压缩—创建索引

创建LZO文件的索引,LZO压缩文件的可切片特性依赖于其索引,故我们需要手动为LZO压缩文件创建索引。若无索引,则LZO文件的切片只有一个。

将bigtable.lzo(200M)上传到集群的根目录

hadoop fs -mkdir /input hadoop fs -put bigtable.lzo /input执行wordcount程序

hadoop jar /opt/module/hadoop-3.1.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar wordcount -Dmapreduce.job.inputformat.class=com.hadoop.mapreduce.LzoTextInputFormat /input /output1

对上传的LZO文件建索引

hadoop jar /opt/module/hadoop-3.1.3/share/hadoop/common/hadoop-lzo-0.4.20.jar com.hadoop.compression.lzo.DistributedLzoIndexer /input/bigtable.lzo再次执行WordCount程序

hadoop jar /opt/module/hadoop-3.1.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.1.3.jar wordcount -Dmapreduce.job.inputformat.class=com.hadoop.mapreduce.LzoTextInputFormat /input /output2

注意:如果以上任务,在运行过程中报如下异常

Container [pid=8468,containerID=container_1594198338753_0001_01_000002] is running 318740992B beyond the 'VIRTUAL' memory limit. Current usage: 111.5 MB of 1 GB physical memory used; 2.4 GB of 2.1 GB virtual memory used. Killing container. Dump of the process-tree for container_1594198338753_0001_01_000002 :解决办法:在hadoop103的/opt/module/hadoop-3.1.3/etc/hadoop/yarn-site.xml文件中增加如下配置,然后分发到hadoop104、hadoop105服务器上,并重新启动集群。

<!--是否启动一个线程检查每个任务正使用的物理内存量,如果任务超出分配值,则直接将其杀掉,默认是true --> <property> <name>yarn.nodemanager.pmem-check-enabled</name> <value>false</value> </property> <!--是否启动一个线程检查每个任务正使用的虚拟内存量,如果任务超出分配值,则直接将其杀掉,默认是true --> <property> <name>yarn.nodemanager.vmem-check-enabled</name> <value>false</value> </property>

☆

版权声明:本文为weixin_45267102原创文章,遵循CC 4.0 BY-SA版权协议,转载请附上原文出处链接和本声明。