一、前言

上一节介绍了 Kubernetes (k8s) 内网集群的搭建详细图解 ,也介绍了云服务器按量付费的租赁方式,但是这种方式有点不好之处就是,每次停机重启之后 , IP地址就变了,导致XShell 连接工具每次要改IP, 很是麻烦, 好在我这个人比较能薅羊毛,于是在腾讯云, 百度云, 阿里云上面都薅了一波羊毛。所以趁着休息,将手头的几台云服务器搭建成 k8s 集群,由于这几台云服务属于不同的云服务厂商,无法搭建局域网环境的 k8s 集群,故笔者搭建的是公网环境的 k8s 集群。

二、环境准备

我这边薅的羊毛配置如下:

| 云产商 | Ip地址 | 系统环境 | 配置 | 角色 |

|---|---|---|---|---|

| 腾讯云 | 101.34.253.57 | CentOS 8.0 | CPU: 4核 内存: 4GB 80GB SSD云硬盘 1200GB/月(带宽:8Mbps) | master |

| 阿里云 | 49.232.112.117 | CentOS 8.0 | CPU: 4核 内存: 4GB 80GB SSD云硬盘 1200GB/月(带宽:8Mbps) | node1 |

| 百度云 | 106.12.118.136 | CentOS 8.0 | CPU: 2核 内存: 4GB内存 80GB磁盘/6Mbps带宽 | node2 |

- 开启 6443 TCP端口:给 kube-apiserver 使用

- 开启 8472 UPD端口:给 flannel 网络插件通讯使用

- 开启TCP 30000-32767 范围的端口:NodePort 服务

- 每台机器都设置成不同的hostname

# 1、三台机器一次运行下面的命令, 设置不同的hostname # 语法格式:hostnamectl set-hostname xxx hostnamectl set-hostname master hostnamectl set-hostname node1 hostnamectl set-hostname node2 # 2、重启服务器 reboot

- 将 SELinux 设置为 permissive 模式(相当于将其禁用)

sudo setenforce 0 sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

- 关闭swap

# 临时关闭swap分区,当前会话生效,重启失效 swapoff -a # 永久关闭swap分区 sed -ri 's/.*swap.*/#&/' /etc/fstab

- 允许 iptables 检查桥接流量

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf br_netfilter EOF cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf net.bridge.bridge-nf-call-ip6tables = 1 net.bridge.bridge-nf-call-iptables = 1 EOF sudo sysctl --system

三、环境搭建

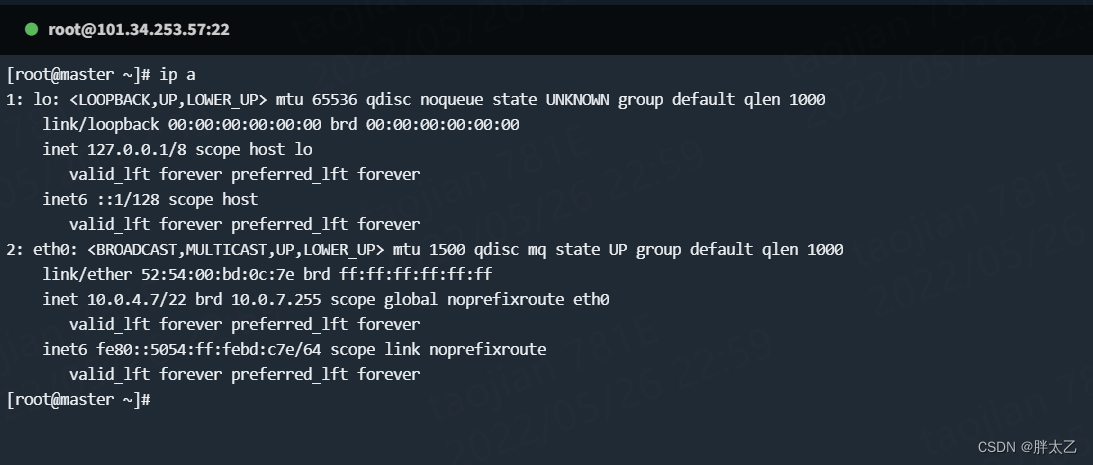

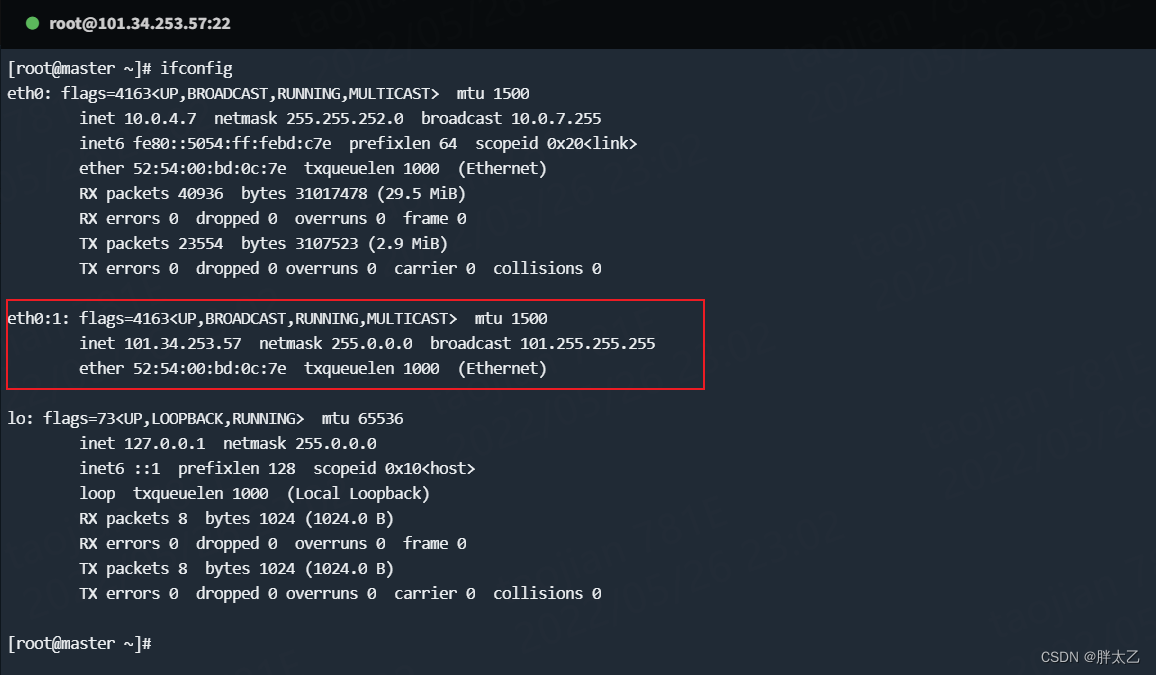

1、创建虚拟网卡

由于云主机网卡绑定的都是内网 IP, 而且几台云服务器位于不同的内网中(VPC网络不互通), 直接搭建会将内网 IP 注册进集群导致搭建不成功。

解决方案:使用虚拟网卡绑定公网 IP, 使用该公网 IP 来注册集群。

# 所有主机都要创建虚拟网卡,并绑定对应的公网 ip

# 临时生效,重启会失效

ifconfig eth0:1 <你的公网IP>

# 永久生效

cat > /etc/sysconfig/network-scripts/ifcfg-eth0:1 <<EOF

BOOTPROTO=static

DEVICE=eth0:1

IPADDR=<你的公网IP>

PREFIX=32

TYPE=Ethernet

USERCTL=no

ONBOOT=yes

EOF

2、安装Docker

这里就不在赘述了, 不知道如何安装的请看我的另外一篇博客

注:由于k8s 和 Docker 版本差异, 可能会产生错误。所以请使用 “yum install -y docker-ce-19.03.13 docker-ce-cli-19.03.13 containerd.io” 替换下述链接的第 8 步的命令。

Linux 下安装Docker图解教程![]() https://blog.csdn.net/IT_rookie_newbie/article/details/120687531

https://blog.csdn.net/IT_rookie_newbie/article/details/120687531

3、安装Kubernetes

3.1、安装kubelet、kubeadm、kubectl (每台机器都需要执行)

cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

exclude=kubelet kubeadm kubectl

EOF

sudo yum install -y kubelet-1.20.9 kubeadm-1.20.9 kubectl-1.20.9 --disableexcludes=kubernetes

sudo systemctl enable --now kubelet3.2、安装kubeadm引导集群(每台机器都需要执行)

sudo tee ./images.sh <<-'EOF'

#!/bin/bash

images=(

kube-apiserver:v1.20.9

kube-proxy:v1.20.9

kube-controller-manager:v1.20.9

kube-scheduler:v1.20.9

coredns:1.7.0

etcd:3.4.13-0

pause:3.2

)

for imageName in ${images[@]} ; do

docker pull registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/$imageName

done

EOF

chmod +x ./images.sh && ./images.sh3.3、修改kubelet启动参数文件(每台机器都需要执行)

# 此文件安装kubeadm后就存在了

vim /usr/lib/systemd/system/kubelet.service.d/10-kubeadm.conf

# 注意,这步很重要,如果不做,节点仍然会使用内网IP注册进集群

# 在末尾添加参数 --node-ip=公网IP

ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_KUBEADM_ARGS $KUBELET_EXTRA_ARGS --node-ip=<公网IP>3.4、添加配置文件(只在master 主节点执行)

# 添加配置文件,注意替换下面的IP

cat > kubeadm-config.yaml <<EOF

apiVersion: kubeadm.k8s.io/v1beta2

kind: ClusterConfiguration

kubernetesVersion: v1.20.9

apiServer:

certSANs:

- master #请替换为hostname

- xx.xx.xx.xx #请替换为公网IP

- xx.xx.xx.xx #请替换为私网IP

- 10.96.0.1

controlPlaneEndpoint: xx.xx.xx.xx:6443 #替换为公网IP

imageRepository: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images

networking:

podSubnet: 10.244.0.0/16

serviceSubnet: 10.96.0.0/12

---

apiVersion: kubeproxy-config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

featureGates:

SupportIPVSProxyMode: true

mode: ipvs

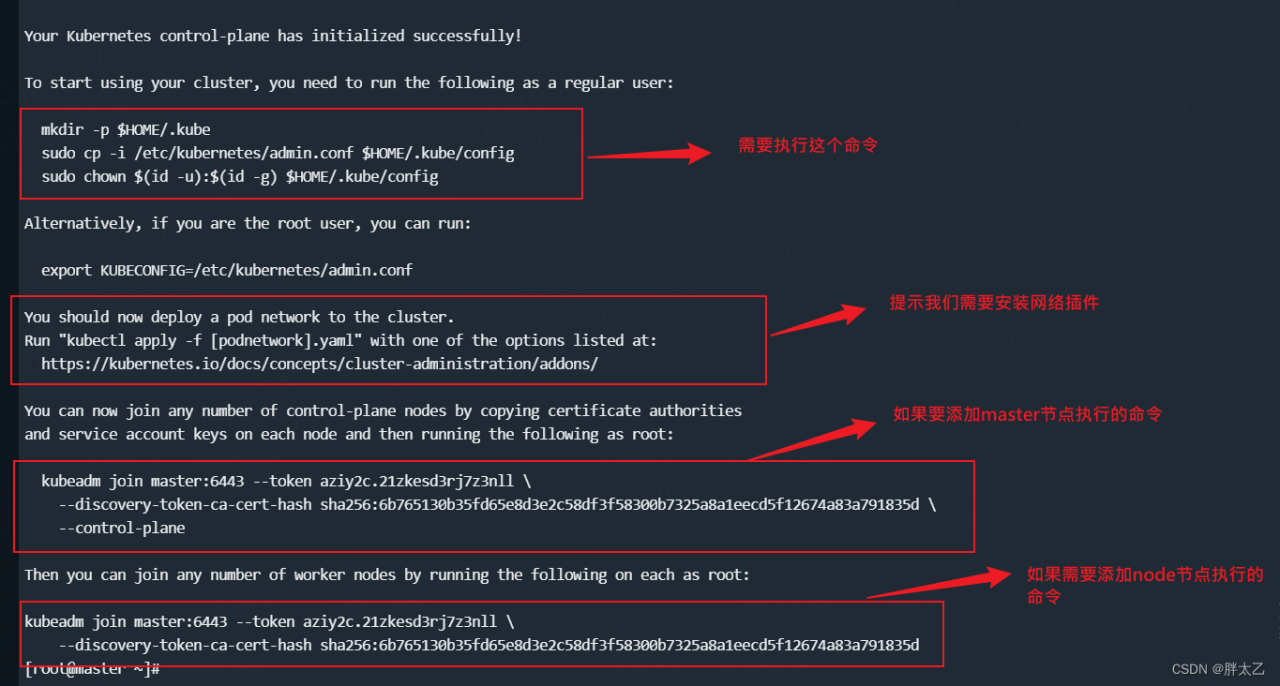

EOF3.5、初始化主节点(只在master 主节点执行)

kubeadm init --config=kubeadm-config.yaml

3.6、执行命令

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config3.7、修改kube-apiserver参数(master,只修改注释项,其余的不要动)

# 修改两个信息,添加--bind-address和修改--advertise-address

vim /etc/kubernetes/manifests/kube-apiserver.yaml

apiVersion: v1

kind: Pod

metadata:

annotations:

kubeadm.kubernetes.io/kube-apiserver.advertise-address.endpoint: 10.0.20.8:6443

creationTimestamp: null

labels:

component: kube-apiserver

tier: control-plane

name: kube-apiserver

namespace: kube-system

spec:

containers:

- command:

- kube-apiserver

- --advertise-address=xx.xx.xx.xx # 修改为公网IP

- --bind-address=0.0.0.0 # 新增参数

- --allow-privileged=true

- --authorization-mode=Node,RBAC

- --client-ca-file=/etc/kubernetes/pki/ca.crt

- --enable-admission-plugins=NodeRestriction

- --enable-bootstrap-token-auth=true

- --etcd-cafile=/etc/kubernetes/pki/etcd/ca.crt

- --etcd-certfile=/etc/kubernetes/pki/apiserver-etcd-client.crt

- --etcd-keyfile=/etc/kubernetes/pki/apiserver-etcd-client.key

- --etcd-servers=https://127.0.0.1:2379

- --insecure-port=0

- --kubelet-client-certificate=/etc/kubernetes/pki/apiserver-kubelet-client.crt

- --kubelet-client-key=/etc/kubernetes/pki/apiserver-kubelet-client.key

- --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname

- --proxy-client-cert-file=/etc/kubernetes/pki/front-proxy-client.crt

- --proxy-client-key-file=/etc/kubernetes/pki/front-proxy-client.key

- --requestheader-allowed-names=front-proxy-client

- --requestheader-client-ca-file=/etc/kubernetes/pki/front-proxy-ca.crt

- --requestheader-extra-headers-prefix=X-Remote-Extra-

- --requestheader-group-headers=X-Remote-Group

- --requestheader-username-headers=X-Remote-User

- --secure-port=6443

- --service-account-issuer=https://kubernetes.default.svc.cluster.local

- --service-account-key-file=/etc/kubernetes/pki/sa.pub

- --service-account-signing-key-file=/etc/kubernetes/pki/sa.key

- --service-cluster-ip-range=10.96.0.0/12

- --tls-cert-file=/etc/kubernetes/pki/apiserver.crt

- --tls-private-key-file=/etc/kubernetes/pki/apiserver.key

image: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/kube-apiserver:v1.20.9

imagePullPolicy: IfNotPresent

livenessProbe:

failureThreshold: 8

httpGet:

host: 10.0.20.8

path: /livez

port: 6443

scheme: HTTPS

initialDelaySeconds: 10

periodSeconds: 10

timeoutSeconds: 15

name: kube-apiserver

readinessProbe:

failureThreshold: 3

httpGet:

host: 10.0.20.8

path: /readyz

port: 6443

scheme: HTTPS

periodSeconds: 1

timeoutSeconds: 15

resources:

requests:

cpu: 250m

startupProbe:

failureThreshold: 24

httpGet:

host: 10.0.20.8

path: /livez

port: 6443

scheme: HTTPS

initialDelaySeconds: 10

periodSeconds: 10

timeoutSeconds: 15

volumeMounts:

- mountPath: /etc/ssl/certs

name: ca-certs

readOnly: true

- mountPath: /etc/pki

name: etc-pki

readOnly: true

- mountPath: /etc/kubernetes/pki

name: k8s-certs

readOnly: true

hostNetwork: true

priorityClassName: system-node-critical

volumes:

- hostPath:

path: /etc/ssl/certs

type: DirectoryOrCreate

name: ca-certs

- hostPath:

path: /etc/pki

type: DirectoryOrCreate

name: etc-pki

- hostPath:

path: /etc/kubernetes/pki

type: DirectoryOrCreate

name: k8s-certs

status: {}3.8、安装flannel网络插件(只在master 主节点执行)

flanel Git地址:flannel

# 1、下载flannel配置文件

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml修改 kube-flannel.yml内容,如下:

apiVersion: policy/v1beta1

kind: PodSecurityPolicy

metadata:

name: psp.flannel.unprivileged

annotations:

seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default

seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default

apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default

apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default

spec:

privileged: false

volumes:

- configMap

- secret

- emptyDir

- hostPath

allowedHostPaths:

- pathPrefix: "/etc/cni/net.d"

- pathPrefix: "/etc/kube-flannel"

- pathPrefix: "/run/flannel"

readOnlyRootFilesystem: false

# Users and groups

runAsUser:

rule: RunAsAny

supplementalGroups:

rule: RunAsAny

fsGroup:

rule: RunAsAny

# Privilege Escalation

allowPrivilegeEscalation: false

defaultAllowPrivilegeEscalation: false

# Capabilities

allowedCapabilities: ['NET_ADMIN', 'NET_RAW']

defaultAddCapabilities: []

requiredDropCapabilities: []

# Host namespaces

hostPID: false

hostIPC: false

hostNetwork: true

hostPorts:

- min: 0

max: 65535

# SELinux

seLinux:

# SELinux is unused in CaaSP

rule: 'RunAsAny'

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: flannel

rules:

- apiGroups: ['extensions']

resources: ['podsecuritypolicies']

verbs: ['use']

resourceNames: ['psp.flannel.unprivileged']

- apiGroups:

- ""

resources:

- pods

verbs:

- get

- apiGroups:

- ""

resources:

- nodes

verbs:

- list

- watch

- apiGroups:

- ""

resources:

- nodes/status

verbs:

- patch

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: flannel

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: flannel

subjects:

- kind: ServiceAccount

name: flannel

namespace: kube-system

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: flannel

namespace: kube-system

---

kind: ConfigMap

apiVersion: v1

metadata:

name: kube-flannel-cfg

namespace: kube-system

labels:

tier: node

app: flannel

data:

cni-conf.json: |

{

"name": "cbr0",

"cniVersion": "0.3.1",

"plugins": [

{

"type": "flannel",

"delegate": {

"hairpinMode": true,

"isDefaultGateway": true

}

},

{

"type": "portmap",

"capabilities": {

"portMappings": true

}

}

]

}

net-conf.json: |

{

"Network": "10.244.0.0/16", # 这里是kubeadm-config.yaml配置的podsnetwork

"Backend": {

"Type": "vxlan"

}

}

---

apiVersion: apps/v1

kind: DaemonSet

metadata:

name: kube-flannel-ds

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

- matchExpressions:

- key: kubernetes.io/os

operator: In

values:

- linux

hostNetwork: true

priorityClassName: system-node-critical

tolerations:

- operator: Exists

effect: NoSchedule

serviceAccountName: flannel

initContainers:

- name: install-cni

image: quay.io/coreos/flannel:v0.14.0

command:

- cp

args:

- -f

- /etc/kube-flannel/cni-conf.json

- /etc/cni/net.d/10-flannel.conflist

volumeMounts:

- name: cni

mountPath: /etc/cni/net.d

- name: flannel-cfg

mountPath: /etc/kube-flannel/

containers:

- name: kube-flannel

image: quay.io/coreos/flannel:v0.14.0

command:

- /opt/bin/flanneld

args:

- --ip-masq

- --kube-subnet-mgr

- --public-ip=$(PUBLIC_IP) # 新增

- --iface=eth0 # 新增

resources:

requests:

cpu: "100m"

memory: "50Mi"

limits:

cpu: "100m"

memory: "50Mi"

securityContext:

privileged: false

capabilities:

add: ["NET_ADMIN", "NET_RAW"]

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

- name: PUBLIC_IP #新增

valueFrom: #新增

fieldRef: #新增

fieldPath: status.podIP #新增

volumeMounts:

- name: run

mountPath: /run/flannel

- name: flannel-cfg

mountPath: /etc/kube-flannel/

volumes:

- name: run

hostPath:

path: /run/flannel

- name: cni

hostPath:

path: /etc/cni/net.d

- name: flannel-cfg

configMap:

name: kube-flannel-cfg创建网络插件

kubectl apply -f kube-flannel.yml3.9、将 node1 和 node2 节点加入工作节点

在工作节点(node1 , node2) 上执行上述初始化结果中的令牌内容

kubeadm join k8s-master:6443 --token kqi0e4.j8v7dg0qxx4i3re9 \

--discovery-token-ca-cert-hash sha256:b8b22ee57294e3c9e4bf09d40ec46dfd2fbb3b07287d512361380fb6b3da019a注:这个命令24小时内有效,如果超出了时间范围就执行 “kubeadm token create --print-join-command” ,重新生成一个令牌即可。

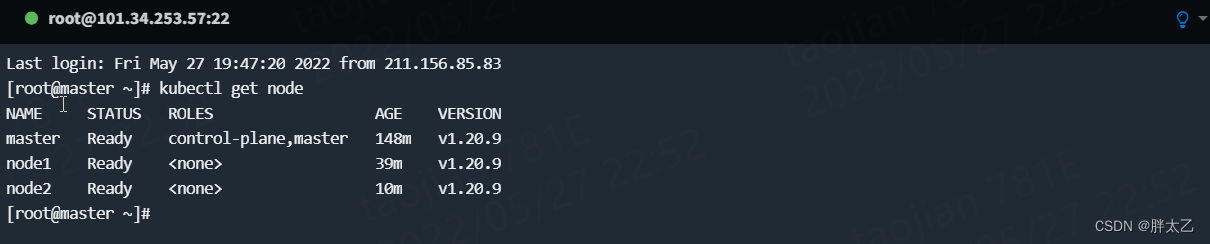

3.10、验证集群

在master 节点上使用 “kubectl get nodes” 来验证集群

如果端口 ping 不通,检查是否开启了“环境准备” 中提到的相关端口。

四、部署dashboard(只在master 主节点执行)

这里就不在赘述了, 可以去看我的另外一篇文章 : “二、Kubernetes (k8s) 内网集群的搭建详细图解_胖太乙的博客-CSDN博客”